Author Archives: Ivan Pepelnjak

One of the publicly observable artifacts of the October 2021 Facebook outage was an intricate interaction between BGP routing and their DNS servers needed to support optimal anycast configuration. Not surprisingly, it was all networking engineers’ fault according to some opinions:

There’s no need for anycast/BGP advertisement for DNS servers. DNS is already highly available by design. Only network people never understand that, which leads to overengineering.

It’s not that hard to find a counter-argument: while it looks like there are only 13 root name servers, each one of them is a large set of instances advertising the same IP prefix to the Internet.

It looks like netsim-tools reached a somewhat stable state, so it was time to do a cleanup and publish release 1.0 (also available on PyPI, use pip3 install --upgrade netsim-tools to fetch it).

During the cleanup, I removed all references to the obsolete scripts, leaving only the netlab command. I also found an old bash script that enabled LLDP passthrough on Linux bridges and made it part of the netlab up process; your libvirt-based labs will have LLDP enabled by default.
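For readers wondering what LLDP passthrough on a Linux bridge involves, here is a minimal sketch. It is not the script mentioned above, and the function names and the bridge name virbr1 are made up for illustration. It relies on the Linux bridge group_fwd_mask attribute, where bit N controls forwarding of frames sent to the link-local group address 01-80-C2-00-00-0N; LLDP uses 01-80-C2-00-00-0E.

```python
from pathlib import Path

# Last octet of the LLDP "nearest bridge" group address 01-80-C2-00-00-0E
LLDP_LAST_OCTET = 0x0E


def group_fwd_mask_bit(last_octet: int) -> int:
    """Return the group_fwd_mask bit for link-local address 01-80-C2-00-00-XX."""
    if not 0 <= last_octet <= 0x0F:
        raise ValueError("group_fwd_mask covers only 01-80-C2-00-00-00 through -0F")
    return 1 << last_octet


def group_fwd_mask_path(bridge: str, sysfs_root: str = "/sys/class/net") -> Path:
    """Build the sysfs path of the bridge attribute (hypothetical helper)."""
    return Path(sysfs_root) / bridge / "bridge" / "group_fwd_mask"


def enable_lldp_passthrough(bridge: str) -> None:
    """Write the LLDP bit to the bridge; needs root and an existing bridge."""
    mask = group_fwd_mask_bit(LLDP_LAST_OCTET)
    group_fwd_mask_path(bridge).write_text(f"{mask}\n")


if __name__ == "__main__":
    # Only print what would be written; nothing on the system is changed here.
    print(group_fwd_mask_path("virbr1"), hex(group_fwd_mask_bit(LLDP_LAST_OCTET)))
```

Note that the sketch overwrites the mask instead of merging it with bits that might already be set, which is good enough for a lab bridge but worth revisiting elsewhere.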

Interested? Install the tools and follow the tutorials to get started.

A long, long time ago (seven years to be precise), ISOC naively tried to bridge the gap between network operators and the Internet Vendor Engineering Task Force. They started with a widespread survey asking operators why they’re hesitant to participate in IETF mailing lists and meetings.

The result: the Operators and the IETF draft that never moved beyond the -00 version. A quick glimpse into the Potential Challenges will tell you why the IETF preferred to kill the messenger (and why I published this blog post on Halloween).

Just FYI: if you’re wondering about the wisdom of the “every networking engineer should become a programmer” religion, you might benefit from the Programming Sucks reality check. I had just enough exposure to programming to realize how spot-on it is (and couldn’t decide whether to laugh or cry).

We have school holidays this week, so I’m reposting wonderful comments that would otherwise be lost somewhere in the page margins. Today: Minh Ha on the recent Facebook failure and overly complex systems (slightly edited).

I incidentally commented on your NSF post some 3 weeks before […the Facebook outage…] happened, on the unpredictable nature of nonlinear effects resulting from optimization-induced complexity. Their outage just drives home the point that optimization is a dumb process and leads to combinations of circular dependency that no one can account for and test.

We have school holidays this week, so I’m reposting wonderful comments that would otherwise be lost somewhere in the page margins. Today: Erik Auerswald’s excellent summary of BFD, NSF, and GR.

I’d suggest to step back a bit and consider the bigger picture: What is BFD good for? What is GR/NSF/NSR/SSO good for?

BFD and GR/NSF/NSR/SSO have different goals: one enables quick fail over, the other prevents fail over. Combining both promises to be interesting.

We have school holidays this week, so I’m reposting wonderful comments that would otherwise be lost somewhere in the page margins. Today: Minh Ha on the complexity of emulating layer-2 networks with VXLAN and EVPN.

Dmytro Shypovalov is a master networker who has a sophisticated grasp of some of the most advanced topics in networking. He doesn’t write often, but when he does, he writes exceptional content, both deep and broad. Have to say I agree with him 300% on “If an L2 network doesn’t scale, design a proper L3 network. But if people want to step on rakes, why discourage them.”

We have school holidays this week, so I’m reposting wonderful comments that would otherwise be lost somewhere in the page margins. Today: Dmitry Perets on the interactions between BFD and GR.

Well, assuming that the C-bit is set honestly (will be funny if not) and assuming that the Helper is using this bit correctly (and I think it’s pretty well defined what “correctly” means - see section 4.3 in RFC 5882), the answer is pretty clear.
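To make the C-bit logic a bit more tangible, here is a minimal sketch of how a GR helper might react to a BFD session failure, based on my reading of section 4.3 of RFC 5882. The class and function names are hypothetical, and a real implementation would also have to deal with timers and per-protocol details (for example, whether the control protocol signals planned restarts).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BfdSession:
    """Hypothetical view of a BFD session as seen by the GR helper."""
    local_c_bit: bool    # C bit in the control packets we send
    remote_c_bit: bool   # C bit in the control packets sent by the restarting peer
    is_up: bool          # current session state


def control_plane_independent(session: BfdSession) -> bool:
    # BFD is fate-independent only if the C bit is set in both directions.
    return session.local_c_bit and session.remote_c_bit


def helper_should_abort_gr(session: BfdSession) -> bool:
    """Decide whether a graceful restart in progress should be aborted."""
    if session.is_up:
        # BFD still works, so the forwarding path is fine; keep helping.
        return False
    # BFD failed while the peer is restarting. If BFD does not share fate
    # with the control plane, the failure means the forwarding path is gone.
    # Otherwise the failure is most likely a side effect of the restart.
    return control_plane_independent(session)


if __name__ == "__main__":
    for c_local, c_remote in [(True, True), (True, False), (False, False)]:
        failed = BfdSession(c_local, c_remote, is_up=False)
        print(c_local, c_remote, helper_should_abort_gr(failed))
```

The sketch deliberately treats a clear C bit in either direction as "shares fate with the control plane", which trades detection of a simultaneous forwarding failure for the ability to use BFD and GR together.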

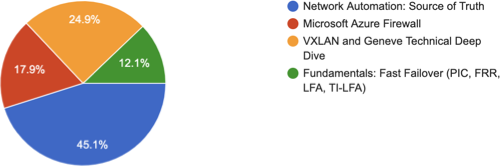

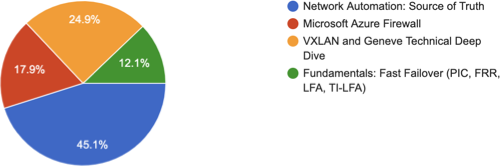

A few weeks ago I asked my subscribers which webinar they’d like to see in November (thanks a million to everyone who replied!). Not surprisingly, network automation got the top spot, but I was a bit sad to see my long-term pet project at the bottom of the list:

In case you’re ever asked to justify an investment in network automation, read How to Make the Case for Automation Architecture first. Not surprisingly, it includes the evergreen “what problem are you trying to solve?”

Network validation is becoming another overhyped buzzword with many opinionated pundits talking about it and few environments using it in practice (why am I not surprised?)

As always, there are exceptions. They don’t have to be members of the FAANG club, and some of them get the job done with open-source tools regardless of what vendor marketers would like you to believe. For example, Donatas Abraitis described how the Hostinger networking team gradually implemented network validation using Cumulus VX, Vagrant, SuzieQ, PyTest and Test Kitchen. Enjoy!