Repetition Without Repetition

I just finished a wonderful week at Cisco Live EMEA catching up with some of the best people in the industry. I got to chat with trainers like Orhan Ergun and David Bombal and see how they’re continuing to embrace the need for people in the networking community to gain knowledge and training. It also made me think about a concept I recently heard about that turns out to be a perfect analogy for my training philosophy, even though it’s almost 70 years old.

Practice Makes Perfect

Repetition without repetition. The idea seems like a paradox at first. How can I repeat something without repeating it? I’m sure that the people in 1967 who picked up the book by Soviet neurophysiologist Nikolai Aleksandrovitsch Bernstein were just as confused. Why should you do things over and over again if not to get good at performing the task or learning the skill?

The key in this research from Bernstein lay in how the practice happened. In this particular case, he looked at blacksmiths to see how they used hammers to strike the pieces they were working on. The most accurate of his test subjects didn’t just perform the…

A Handy Acronym for Troubleshooting

While I may be getting further from my days of being an active IT troubleshooter, that doesn’t mean I can’t keep refining my technique. As I look back on my formative years of troubleshooting, either from a desktop perspective or from a larger enterprise role, I find that there were always a few things that were critical to understand about the issues I was facing.

Sadly, getting that information out of people in the middle of a crisis wasn’t always super easy. I often ran into people who were very hard to communicate with during an outage or a big problem. Sometimes they were complicit because they had made the mistake that caused it. They also bristled at the idea of someone else coming in to fix something they couldn’t or wouldn’t. Just as often I ran into people who loved to give me lots of information that wasn’t relevant to the issue. Whether they were nervous talkers or just had a poor grasp of the situation, the result was that I had to sift through all that data to tease out the information I needed.

The Method

Today, as I look back on my career I would like…

Painless Progress with My Ubiquiti Upgrade

I’m not a wireless engineer by trade. I don’t have a lab of access points that I’m using to test the latest and greatest solutions. I leave that to my friends. I fall more in the camp of having a working wireless network that meets my needs and keeps my family from yelling at me when the network is down.

Ubiquitous Usage

For the last five years my house has been running on Ubiquiti gear. You may recall I did a review back in 2018 after having it up and running for a few months. Since then I’ve had no issues. In fact, the only problem I had was not with the gear but with the machine I installed the controller software on. It turns out hard disk drives do eventually go bad, and I needed to replace the drive and get everything up and running again. That was my intention when it went down sometime in 2021. Of course, life being what it is, I deprioritized the recovery of the system. I realized after more than a year that my wireless network hadn’t hiccuped once. Sure, I couldn’t make any changes to it, but the joy of having a stable environment…

Back On Track in 2024

It’s time to look back at my year that was and figure out where this little train jumped off the rails. I’ll be the first to admit that I ran out of steam chugging along toward the end of the year. My writing output was way down for reasons I still can’t quite figure out. Everything has felt like a much bigger task to accomplish throughout the year. To that end, let’s look at what I wanted to do and how it came out:

- Keeping Track of Things: I did a little bit better with this one, aside from my post schedule. I tried to track things much more and understand deadlines and such. I didn’t always succeed like I wanted to but at least I made the effort.

- Creating Evergreen Content: This one was probably a miss. I didn’t create nearly as much content this year as I have in years past. What little I did create sometimes felt unfocused and less impactful. Part of that has to do with the overall move away from written content to something more video and audio focused. However, even my other content like Tomversations was significantly reduced this year. I will…

Production Reductions

You’ve probably noticed that I haven’t been writing quite as much this year as I have in years past. I finally hit the wall that comes for all content creators. A combination of my job and the state of the industry meant that I found myself slipping off my self-appointed weekly posting schedule more and more often in 2023. In fact, there were several times I skipped a whole week just to put something out every other week, especially in the latter half of the year.

I’ve always wanted to keep the content level high around here and give my audience things to think about. As the year wore on I found myself running out of those ideas as portions of the industry slowed down. If other people aren’t getting excited about tech why should I? Sure, I could probably write about Wi-Fi 7 or SD-WAN or any number of topics over and over again but it’s harder to repeat yourself for an audience that takes a more critical eye to your writing than it is for someone that just wants to churn out material.

My Bruce Wayne job kept me busy this year. I’m proud of all the content…

Routing Through the Forest of Trees

Some friends shared a Reddit post the other day that made me both shake my head and ponder the state of the networking industry. Here is the locked post for your viewing pleasure. It was locked because the comments were going to devolve into a mess eventually. The person who wrote the post seems to be honest and sincere in their approach to “layer 3 going away”. The post generated a lot of amusement from the networking side of IT about how this person doesn’t understand the basics, but I think there’s a deeper issue going on.

Trails To Nowhere

Our visibility into the state of the network below the application interface is very general in today’s world. That’s because things “just work”, to borrow an overused phrase. Aside from the occasional troubleshooting exercise to find out why packets destined for Azure or AWS are failing along the way, when was the last time you had to get really creative in finding a routing issue in someone else’s equipment? We spend more time now trying to figure out how to make our own networks operate efficiently and less time worrying about what happens to the packets when they leave our organization.

Asking The Right Question About The Wireless Future

It wasn’t that long ago that I wrote a piece about how Wi-Fi 6E isn’t going to move the needle very much in terms of connectivity. I stand by my convictions that the technology is just too new and doesn’t provide a great impetus to force users to upgrade or augment systems that are already deployed. Thankfully, someone at the recent Mobility Field Day 10 went and did a great job of summarizing some of my objections in a much simpler way. Thanks to Nick Swiatecki for this amazing presentation:

He captured so many of my hesitations as he discussed the future of wireless connectivity. And he managed to expand on them perfectly!

New Isn’t Automatically Better

Any time I see someone telling me that Wi-Fi 7 is right around the corner and that we need to see what it brings, I can’t help but laugh. There may be devices that have support for it right now, but as Nick points out in the above video, that’s only one part of the puzzle. We still have to wait for the clients and the regulatory bodies to catch up to the infrastructure technology. Could you imagine if we did the same…

AI Is Making Data Cost Too Much

You may recall that I wrote a piece almost six years ago comparing big data to nuclear power. Part of the purpose of that piece was to knock the wind out of the “data is oil” comparisons that were so popular. Today’s landscape is totally different thanks to the shifts that the IT industry has undergone in the past few years. I now believe that AI is going to cause a massive amount of wealth transfer away from the AI companies and cause startup economics to shift.

Can AI Really Work for Enterprises?

In this episode of Packet Pushers, Greg Ferro and Brad Casemore debate a lot of topics around the future of networking. One of the things Brad brought up, which Greg also pointed out, is that data being used for AI algorithm training is being stored in the cloud. That massive amount of data is sitting there waiting to be used between training runs, and it’s costing some AI startups a fortune in cloud costs.

AI algorithms need to be trained to be useful. When you use ChatGPT to write a term paper or ask nonsensical questions, you’re using the output of the GPT training run.

Does Automation Require Reengineering?

During Networking Field Day 33 this week we had a great presentation from Graphiant around their solution. The presentation was great and you should definitely check out the videos linked above, but Ali Shaikh said something in one of the sessions that resonated with me quite a bit:

Automation of an existing system doesn’t change the system.

Seems simple, right? It belies a major issue we’re seeing with automation. Making the existing stuff run faster doesn’t actually fix our issues. It just makes them less visible.

Rapid Rattletraps

Most systems don’t work according to plan. They’re an accumulation of years of work that doesn’t always fit well together. For instance, the classic XKCD comic:

When it comes to automation, the idea is that we want to make things run faster and reduce the likelihood of error. What we don’t talk about is how each individual system has its own quirks and may not even be a good candidate for automation at any point. Automation is all about making things work without intervention. It’s also dependent on making sure the process you’re trying to automate is well-documented and repeatable in the first place.

How many times have you seen or heard of…

Victims of Success

It feels like the cybersecurity space is getting more and more crowded with breaches in the modern era. I joke that on our weekly Gestalt IT Rundown news show we could include a breach story every week and still not cover them all. Even Risky Business can’t keep up. However, the defenders seem to be gaining on the attackers, and that means the battle lines are shifting again.

Don’t Dwell

A recent article from The Register noted that dwell times for detection of ransomware and malware have dropped almost a full day in the last year. Dwell time is especially important because detecting the ransomware early means you can take preventative measures before it can be deployed. I’ve seen all manner of early detection systems, such as data protection companies measuring the entropy of data-at-rest to determine when it can no longer be compressed, meaning it has likely been encrypted and should be restored.
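The entropy idea is easier to see in a few lines of code. What follows is a minimal Python sketch of the general technique, not any data protection vendor’s actual detector: it measures the Shannon entropy of a block in bits per byte and its zlib compression ratio. The function names and the 7.5 bits-per-byte threshold are illustrative assumptions. Ordinary documents score well below the 8-bit ceiling and compress well, while encrypted data looks like random bytes on both measures.

```python
import math
import os
import zlib
from collections import Counter


def shannon_entropy(data: bytes) -> float:
    """Shannon entropy of `data` in bits per byte: 0.0 (constant) up to 8.0 (uniform)."""
    if not data:
        return 0.0
    total = len(data)
    counts = Counter(data)  # iterating over bytes yields ints in 0..255
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def compression_ratio(data: bytes) -> float:
    """Compressed size / original size; values near 1.0 mean the data barely compresses."""
    if not data:
        raise ValueError("compression_ratio needs non-empty input")
    return len(zlib.compress(data, 9)) / len(data)


def looks_encrypted(data: bytes, threshold: float = 7.5) -> bool:
    """Hypothetical heuristic: flag a block whose entropy is close to the 8-bit maximum."""
    return shannon_entropy(data) >= threshold


if __name__ == "__main__":
    document = b"quarterly report " * 256   # repetitive, highly compressible
    ciphertext_like = os.urandom(4096)      # random bytes stand in for encrypted data
    for label, block in (("document", document), ("ciphertext-like", ciphertext_like)):
        print(f"{label}: entropy={shannon_entropy(block):.2f} bits/byte, "
              f"ratio={compression_ratio(block):.2f}, flagged={looks_encrypted(block)}")
```

One caveat: files that are already compressed, such as ZIP archives or JPEG images, also score near 8 bits per byte, so a single threshold like this would produce false positives on its own. It works better as one signal among several.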

Likewise, XDR companies are starting to reduce the time it takes to catch behaviors on the network that are out of the ordinary. When a user starts scanning for open file shares and doing recon on the network, you can almost guarantee they’ve…

The Essence of Cisco and Splunk

You no doubt noticed that Cisco bought Splunk last week for $28 billion. It was a deal that had been rumored for at least a year if not longer. The purchase makes a lot of sense from a number of angles. I’m going to focus on a couple of them here with some alliteration to help you understand why this may be one of the biggest signals of a shift in the way that Cisco does business.

The S Stands for Security

Cisco is now a premier security company. The addition of the most powerful SIEM on the market means that Cisco’s security strategy now has a completeness of vision. SecureX has been a very big part of the sales cycle for Cisco as of late, and having all the parts to make it work top to bottom is a big win. XDR is a great thing for organizations but it doesn’t work without massive amounts of data to analyze. Guess where Splunk comes in?

Aside from some very specialized plays, Cisco now has an answer for just about everything a modern enterprise could want in a security vendor. They may not be number one in every market but…

Wi-Fi 6E Won’t Make a Difference

It’s finally here. The vaunted day when the newest iPhone model has Wi-Fi 6E. You’d be forgiven for missing it. It wasn’t mentioned as a flagship feature in the keynote. I had to unearth it in the tech specs page linked above. The trumpets didn’t sound heralding the coming of a new paradigm shift. In fact, you’d be hard pressed to find anyone that even cares in the long run. Even the rumor mill had moved on before the iPhone 15 was even released. If this is the technological innovation we’ve all been waiting for, why does it sound like no one cares?

Newer Is Better

I might be overselling the importance of Wi-Fi 6E just a bit, but that’s because I talk to a lot of wireless engineers. More than a couple of them said they weren’t even going to bother upgrading to the new USB-C wonder phone unless it had Wi-Fi 6E. Of course, I didn’t do a survey to find out how many of them had 6E-capable access points at home, either. I’d bet the number was 100%. I’d be willing to bet the survey of people outside of that sphere looking to buy an iPhone…

Overcoming the Wall

I was watching a YouTube video this week that had a great quote. The creator was talking about sanding a woodworking project and said something about how much it needed to be sanded.

Whenever you think you’re done, that’s when you’ve just started.

That statement really resonated with me. I’ve found that it’s far too easy to think you’re finished with something right about the time you really need to hunker down and put in extra effort. In running they call it “hitting the wall” and it usually marks the point when your body is out of energy. There’s often another wall you hit mentally before you get there, though, and that’s the one that needs to be overcome with some tenacity.

The Looming Rise

If your brain is like mine you don’t like belaboring something. The mind craves completion and resolution. Once you’ve solved a problem it’s done and finished. No need to continue on with it once you’ve reached a point where it’s good enough. Time to move on to something else that’s new and exciting and a source of dopamine.

However, that feeling of being done with something early on is often a false sense of completion.

Networking Is Fast Enough

Without looking up the specs, can you tell me the PHY differences between Gigabit Ethernet and 10GbE? How about 40GbE and 800GbE? Other than the numbers being different do you know how things change? Do you honestly care? Likewise for Wi-Fi 6, 6E, and 7. Can you tell me how the spectrum changes affect you or why the QAM changes are so important? Or do you want those technologies simply because the numbers are bigger?

The more time I spend in the networking space, the more I realize that we’ve come to a comfortable point with our technology. You could call it a wall, but that carries negative connotations. Most of our end-user Ethernet connectivity is gigabit. Sure, there are the occasional 10GbE cards for desktop workstations that do lots of heavy lifting for video editing or more specialized workflows like medical imaging. The rest of the world has old-fashioned 1000Mb connections based on 802.3z, ratified in 1998.

Wireless is similar. You’re probably running on a Wi-Fi 5 (802.11ac) or Wi-Fi 6 (802.11ax) access point right now. If you’re running on 11ac you might even be connected using Wi-Fi 4 (802.11n) if you’re…

Argument Farming

The old standard.

I’m no stranger to disagreement with people on the Internet. Most of my popular posts grew from my disagreements with others around things like being called an engineer, being a 10x engineer, and something about IPv6 and NAT. I’ve always tried to explain my reasoning for my positions and discuss the relevant points with people who want to have a debate. I tend to avoid responding to people who just accuse me of being wrong and tell me I need to grow up or work in the real world.

Buying the Farm

However, I’ve noticed recently that there have been some people in the realm of social media and influencing that have taken to posting so-called hot takes on things solely for the purpose of engagement. It’s less of a discussion and more of a post that outlines all the reasons why a particular thing that people might like is wrong.

For example, it would be like me posting something about how an apple is the dumbest fruit because it’s not perfectly round or orange or how the peel is ridiculous because you can eat it. While there are some opinions and points to be…

Changing Diapers, Not Lives

When was the last time you heard a product pitch that included words like paradigm shift or disruptive or even game changing? Odds are good that covers the majority of them. Marketing teams love to sell people on the idea of radically shifting the way that they do something or revolutionizing an industry. How often do you feel that companies make something that accomplishes the goal of their marketing hype? Once a year? Once a decade? Of the things that really have changed the world, did they do it with a big splash? Or was it more of a gradual change?

Repetition and Routine

When children are small they are practically helpless. They need to be fed and held and have their diapers changed. Until they are old enough to move and have the motor functions to feed themselves they require constant care. In fact, potty training is usually one of the last things on that list above. Kids can feed themselves and walk places and still be wearing diapers. It’s just one of those things that we do as parents.

Yet changing diapers represents a task that we usually have no issue with. Sure, it’s not the most glamorous…

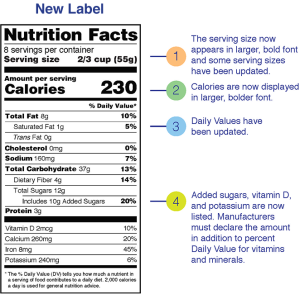

Don’t Let the Cybersecurity Trust Mark Become Like Food Labeling

I got several press releases this week talking about the newest program from the US Federal government for cybersecurity labeling. This program is something designed to help consumers understand how secure IoT devices are and the challenges that can be faced trying to keep your network secure from the large number of smart devices that are being implemented today. Consumer Reports has been pushing for something like this for a while and lauded the move with some caution. I’m going to take it a little further. We need to be very careful about this so it doesn’t become as worthless as the nutrition labels mandated by the government.

Absolute Units

Having labels is certainly better than not having them. Knowing how much sugar a sports drink has is way more helpful than when I was growing up and we had to guess. Knowing where to find that info on a package means I’m not having to go find it somewhere on the Internet[1]. However, all is not sunshine and roses. That’s because of the way that companies choose to fudge their numbers.

Food companies spent a lot of time trying to work the numbers on those nutrition labels for…

Cross Training for Career Completeness

Are you good at your job? Have you spent thousands of hours training to be the best at a particular discipline? Can you configure things with your eyes closed and are finally on top of the world? What happens next? Where do you go if things change?

It sounds like an age-old career question. You’ve mastered a role. You’ve learned all there is to learn. What more can you do? It’s not something specific to technology either. One of my favorite stories about this struggle comes from the iconic martial artist Bruce Lee. He spent his formative years becoming an expert at Wing Chun and no one would argue he wasn’t one of the best. As the story goes, in 1967 he engaged in a sparring match with a practitioner of a different art and, although he won, he was exhausted and thought things had gone on far too long. That experience encouraged him to develop Jeet Kune Do as a way to combine elements of different styles for more efficiency, and it eventually led to the development of mixed martial arts (MMA).

What does Bruce Lee have to do with tech? The value of cross training with different tech disciplines…

My Belated Review of Cisco Live 2023

It’s been a couple of weeks since Cisco Live US 2023 and I’m just now getting around to writing about it. I was thrilled to attend my 18th Cisco Live and it was just the thing I needed to reconnect with the community. The landscape of Cisco Live looks a little different than it has in years past. There are some challenges that are rising that need to be studied and understood before they become bigger than the event itself.

Showstopping Reveals? Or Consistent Improvement?

What was the big announcement from Cisco this year? What was the thing that was said on stage that stopped the presses and got people chattering? Was it a switch? A firewall? Was it a revolutionary new AI platform? Or a stable IP connection to Mars? Do you even know? Or was it more of a discussion of general topics with some technologies brought up alongside them?

In the last few years you may have noticed that the number of huge announcements coinciding with the big yearly conferences has come down a bit. Rather than having some big news drop the morning of the keynote, the big reveals are being given their own time…

Using AI for Attack Attribution

While I was hanging out at Cisco Live last week, I had a fun conversation with someone about the use of AI in security. We’ve seen a lot of companies jump in to add AI-enabled services to their platforms and offerings. I’m not going to spend time debating the merits of it or trying to argue for AI versus machine learning (ML). What I do want to talk about is something that I feel might be a little overlooked when it comes to using AI in security research.

Whodunnit?

After a big breach notification or a report that something has been exposed there are two separate races that start. The most visible is the one to patch the exploit and contain the damage. Figure out what’s broken and fix it so there’s no more threat of attack. The other race involves figuring out who is responsible for causing the issue.

Attribution is something that security researchers value highly in the post-mortem of an attack. If the attack is the first of its kind, the researchers want to know who caused it. They want to see if the attackers are someone new on the scene who have developed new tools and…