Git Rebase: What Can Go Wrong?

Julia Evans wrote another must-read article (if you’re using Git): git rebase: what can go wrong?

I often use git rebase to clean up the commit history of a branch I want to merge into a main branch or to prepare a feature branch for a pull request. I never run it unattended – I always use the interactive option – but even then, I sometimes get into tight spots where I can only hope the results will turn out to be what I expect. Always have a backup, be it another branch or a copy of the branch you’re working on in a remote repository.
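
The backup advice can be turned into a small checklist. The following is a minimal Python sketch that only builds the git command lines (it does not run them). The `backup/<branch>-<timestamp>` naming scheme, the function names, and the optional remote name are hypothetical choices, not a Git convention.

```python
from datetime import datetime
from typing import List, Optional


def backup_branch_name(branch: str, when: datetime) -> str:
    """Hypothetical naming scheme: backup/<branch>-<YYYYmmdd-HHMMSS>."""
    return f"backup/{branch}-{when:%Y%m%d-%H%M%S}"


def safe_rebase_commands(branch: str, base: str, when: datetime,
                         remote: Optional[str] = None) -> List[List[str]]:
    """Return (but do not execute) the git commands for a backed-up interactive rebase."""
    backup = backup_branch_name(branch, when)
    commands = [
        # Keep a branch pointing at the pre-rebase commits
        ["git", "branch", backup, branch],
    ]
    if remote is not None:
        # Optionally keep a copy of the backup in a remote repository as well
        commands.append(["git", "push", remote, backup])
    commands += [
        ["git", "switch", branch],
        ["git", "rebase", "--interactive", base],
    ]
    return commands


def restore_commands(branch: str, backup: str) -> List[List[str]]:
    """Throw away a finished-but-bad rebase and return the branch to the backup.

    Mid-rebase, `git rebase --abort` is the simpler way out.
    """
    return [
        ["git", "switch", branch],
        ["git", "reset", "--hard", backup],
    ]
```

To actually run the steps, you could pass each list to `subprocess.run(cmd, check=True)`; the interactive rebase then opens your editor as usual.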

Video: Kubernetes Calico Plugin

November is turning out to be the Month of BGP on my blog. Keeping in line with that theme, let’s watch Stuart Charlton explain the Calico plugin (which can use BGP to advertise the container networking prefixes to the outside world) in the Kubernetes Networking Deep Dive webinar.

Open BGP Daemons: There’s So Many of Them

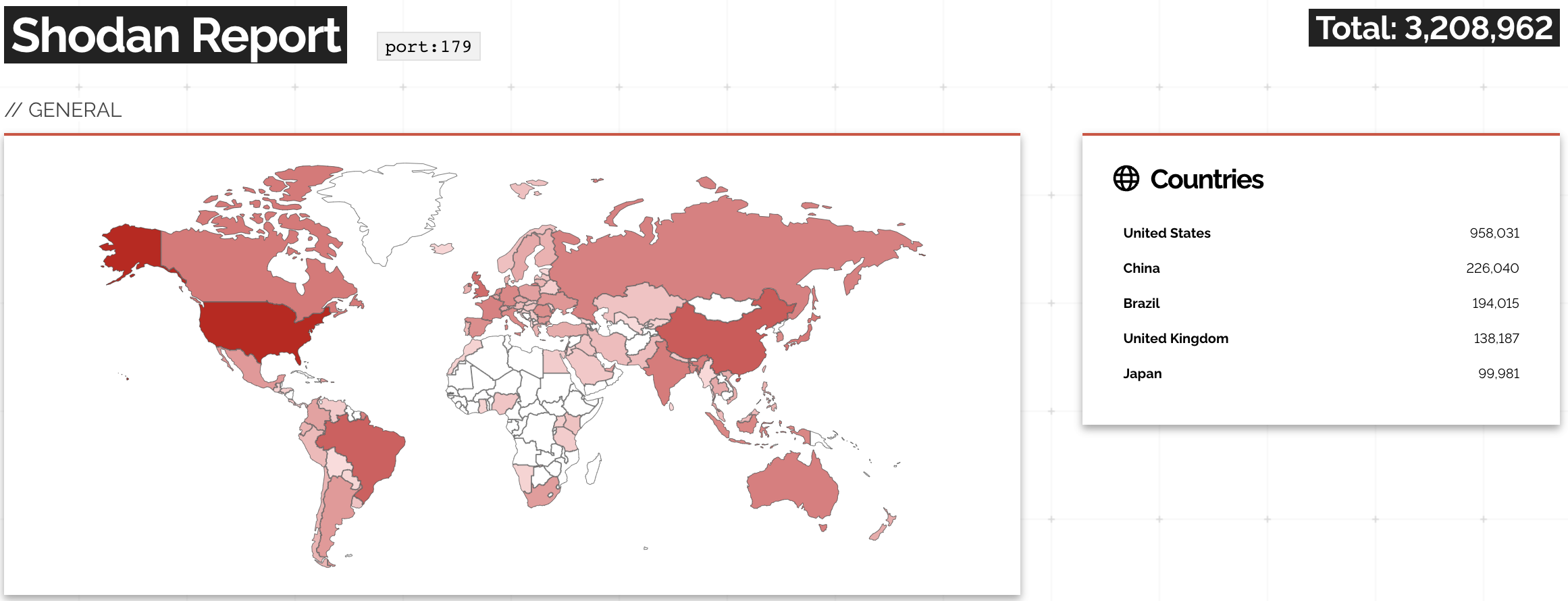

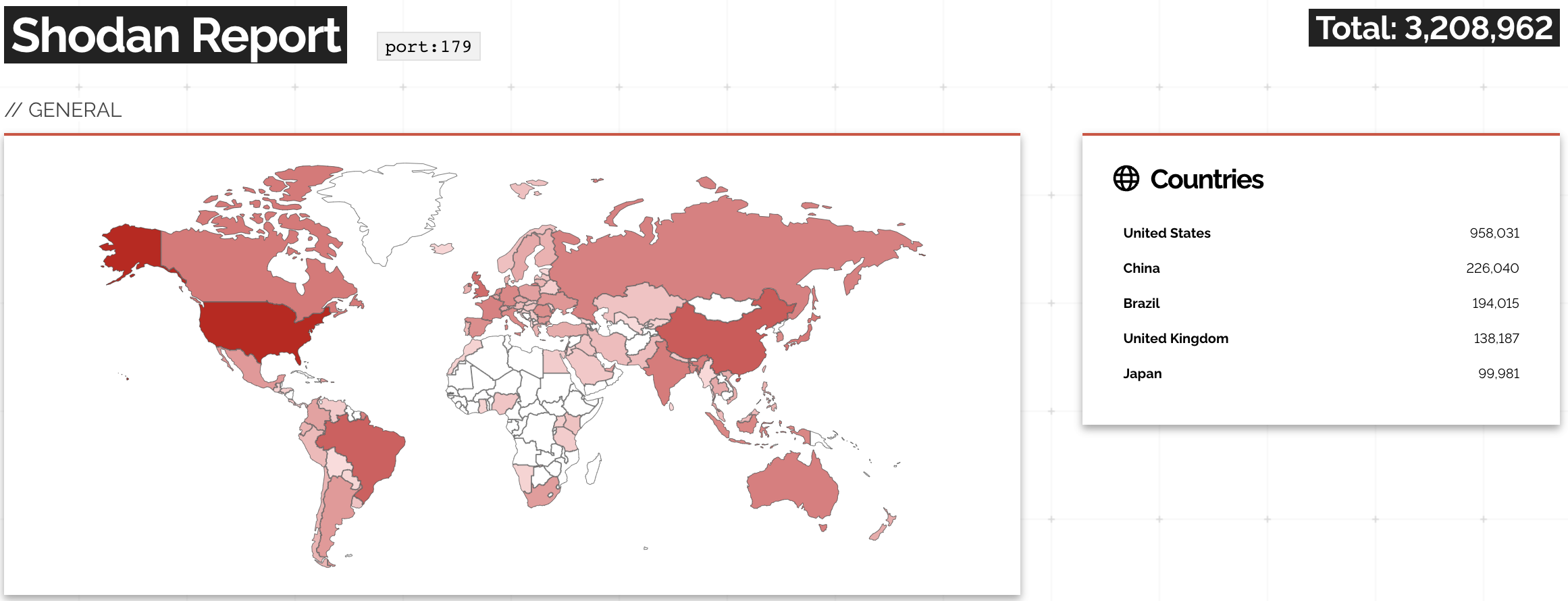

A while ago, the Networking Notes blog published a link to my “Will Network Devices Reject BGP Sessions from Unknown Sources?” blog post with a hint: use Shodan to find how many BGP routers accept a TCP session from anyone on the Internet.

The results are appalling: you can open a TCP session on port 179 with over 3 million IP addresses.

A report on Shodan opening TCP session to port 179
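
Checking whether one of your own routers falls into that category boils down to trying a TCP three-way handshake on port 179. Here is a minimal Python sketch (the function name is hypothetical); a completed handshake only proves that the TCP stack accepts the connection, not that a BGP session would come up. Only probe devices you're responsible for.

```python
import socket


def bgp_port_open(host: str, port: int = 179, timeout: float = 3.0) -> bool:
    """Return True if a TCP handshake with host:port completes within the timeout."""
    try:
        # create_connection performs the full TCP three-way handshake
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Connection refused, reset, unreachable, or timed out
        return False
```

A router configured to reject TCP SYNs from unknown sources on port 179 (for example, with a control-plane ACL) should make this function return False when run from outside its list of configured neighbors.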

Rapid Progress in BGP Route Origin Validation

In 2022, I was invited to speak about Internet routing security at the DEEP conference in Zadar, Croatia. One of the main messages of the presentation was how slow the progress had been even though we had had all the tools available for at least a decade (RFC 7454 was finally published in 2015, and we started writing it in early 2012).

At about that same time, a small group of network operators started cooperating on improving the security and resilience of global routing, eventually resulting in the MANRS initiative – a great place to get an overview of how many Internet Service Providers care about adopting Internet routing security mechanisms.

Fibre Channel Addressing

Whenever we talk about LAN data-link-layer addressing, most engineers automatically switch to the “must be like Ethernet” mentality, assuming all data-link-layer LAN framing must somehow resemble Ethernet frames.

That makes no sense on point-to-point links. As explained in the Early Data-Link Layer Addressing article, you don’t need layer-2 addresses on a point-to-point link between two layer-3 devices. Interestingly, there is one LAN technology (that I’m aware of) that got data-link addressing right: Fibre Channel (FC).
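
Unlike a burned-in Ethernet MAC address, the 24-bit Fibre Channel address (FC_ID) is assigned by the fabric when a node logs in, and it is hierarchical: an 8-bit Domain_ID (the switch), an 8-bit Area_ID, and an 8-bit Port_ID. The following Python sketch decodes such an address; the type and function names are hypothetical, and it ignores well-known addresses and arbitrated-loop details.

```python
from typing import NamedTuple


class FcId(NamedTuple):
    domain: int  # Domain_ID: identifies the switch in the fabric
    area: int    # Area_ID: area within that switch
    port: int    # Port_ID: port within the area


def parse_fcid(fcid: int) -> FcId:
    """Split a 24-bit Fibre Channel address into Domain_ID, Area_ID, and Port_ID."""
    if not 0 <= fcid <= 0xFFFFFF:
        raise ValueError("an FC_ID is a 24-bit value")
    return FcId(domain=(fcid >> 16) & 0xFF, area=(fcid >> 8) & 0xFF, port=fcid & 0xFF)


def format_fcid(fcid: FcId) -> str:
    """Render the address as six hex digits (domain, area, port)."""
    return f"0x{fcid.domain:02x}{fcid.area:02x}{fcid.port:02x}"
```

Because the Domain_ID identifies the switch, FC switches can forward based on the address structure instead of learning every individual end-station address.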

Worth Reading: Confusing Git Terminology

Julia Evans wrote another great article explaining confusing git terminology. Definitely worth reading if you want to move past simple recipes or reminiscing about the old days.

Video: Hacking BGP for Fun and Profit

At least some people learn from others’ mistakes: using the concepts proven by some well-publicized BGP leaks, malicious actors quickly figured out how to hijack BGP prefixes for fun and profit.

Fortunately, those shenanigans wouldn’t spread as far today as they did in the past – according to RoVista, most of the largest networks block the prefixes Route Origin Validation (ROV) marks as invalid.

Notes:

- ROV cannot stop all hijacks, but it can identify more-specific-prefix hijacks (assuming the legitimate origin AS did its job right and published ROAs with an accurate maximum prefix length).

- You’ll find more Network Security Fallacies videos in the How Networks Really Work webinar.
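
The route classification ROV performs (valid, invalid, or not found) can be sketched in a few lines of Python following the RFC 6811 logic. This assumes the ROAs have already been validated and loaded (for example, by an RPKI validator); the `Roa` type and `rov_state` function are hypothetical names, and the examples use documentation prefixes and AS numbers.

```python
from dataclasses import dataclass
from ipaddress import IPv4Network, IPv6Network, ip_network
from typing import Iterable, Union

Network = Union[IPv4Network, IPv6Network]


@dataclass(frozen=True)
class Roa:
    prefix: Network   # prefix the ROA authorizes
    max_length: int   # longest prefix length the origin AS may announce
    origin_as: int    # AS authorized to originate the prefix


def rov_state(route_prefix: str, origin_as: int, roas: Iterable[Roa]) -> str:
    """Classify a BGP route as 'valid', 'invalid', or 'not-found' (RFC 6811 logic)."""
    route = ip_network(route_prefix)
    covered = False
    for roa in roas:
        if roa.prefix.version != route.version:
            continue
        if route.subnet_of(roa.prefix):   # ROA prefix equals or covers the route
            covered = True
            if route.prefixlen <= roa.max_length and origin_as == roa.origin_as:
                return "valid"
    # Covered by at least one ROA but matched by none: invalid
    return "invalid" if covered else "not-found"


roas = [Roa(ip_network("192.0.2.0/24"), 24, 64500)]
print(rov_state("192.0.2.0/24", 64511, roas))    # wrong origin AS
print(rov_state("192.0.2.128/25", 64500, roas))  # more-specific prefix, even with a forged origin
```

Both calls return `invalid`. The second one illustrates the note above: the more-specific hijack is caught only because the prefix owner set the maximum length to 24. With a maximum length of 25, the forged /25 would be valid, and a forged origin on the exact /24 always passes ROV.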

BGP Labs: Build a Transit Network with IBGP

Last time we built a network with two adjacent BGP routers. Now let’s see what happens when we add a core router between them:

Worth Reading: Taming the BGP Reconfiguration Transients

Almost exactly a decade ago I wrote about a paper describing how IBGP migrations can cause forwarding loops and how one could reorder BGP reconfiguration steps to avoid them.

One of the paper’s authors was Laurent Vanbever, who has since moved to ETH Zurich, where his group keeps producing great work, including the Chameleon tool (code on GitHub) that can tame transient loops while reconfiguring BGP. Definitely something worth looking at if you’re running a large BGP network.
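
To see why the order of reconfiguration steps matters, model the forwarding state for one prefix after each step as a map from router to next hop and check every intermediate state for loops. The Python sketch below does just that; the router names and states are hypothetical, and it is a way to visualize the problem, not how Chameleon works.

```python
from typing import Dict, List, Optional

# state[router] is the router it forwards the prefix to; None means
# "delivered locally or leaves the network here".
ForwardingState = Dict[str, Optional[str]]


def find_forwarding_loop(state: ForwardingState, start: str) -> Optional[List[str]]:
    """Follow next hops from start; return the looping routers, or None if the packet exits."""
    path: List[str] = []
    position: Dict[str, int] = {}
    node: Optional[str] = start
    while node is not None:
        if node in position:
            # Back at a router already on the path: that's a forwarding loop
            return path[position[node]:] + [node]
        position[node] = len(path)
        path.append(node)
        node = state.get(node)
    return None


def first_looping_step(steps: List[ForwardingState], start: str) -> Optional[int]:
    """Index of the first intermediate state that contains a loop, or None."""
    for index, state in enumerate(steps):
        if find_forwarding_loop(state, start) is not None:
            return index
    return None
```

For example, if R3 is reconfigured to point back at R2 before R2 is switched away from R3, the intermediate state `{"R1": "R2", "R2": "R3", "R3": "R2"}` loops between R2 and R3, while reconfiguring R2 first would avoid the transient loop.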

Weird: vJunos Evolved 23.2R1.15 Declines DHCP Address

It’s time for a Halloween story: imagine the scary scenario in which a DHCP client asks for an address, gets it, and then immediately declines it. That’s what I’ve been experiencing with vJunos Evolved release 23.2R1.15.

Before someone gets the wrong message: I’m not criticizing Juniper or vJunos.

- Juniper did a great job releasing a virtual appliance that is hassle-free to download.

- DHCP assignment of the management IPv4 address worked with vJunos Evolved release 23.1R1.8.

- There were reports that the DHCP assignment process in vJunos Evolved 23.1R1.8 was not reliable, but it has worked for me so far, so I’m good to go as long as I can run the older release.

- I might get to love vJunos Evolved. Boot- and configuration times are very reasonable.

However, it looks like something broke in vJunos Evolved release 23.2, and it would be nice to figure out what the workaround might be.
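
When troubleshooting such an exchange, it helps to see which DHCP message types the client actually sends. RFC 2131 says a client that detects the offered address is already in use (for example, with an ARP probe) sends DHCPDECLINE, which is one possible (not confirmed) explanation for this behavior. The Python sketch below extracts the message type (option 53) from a DHCP packet's UDP payload, such as one taken from a packet capture; the function name is hypothetical, and it ignores option overloading (option 52).

```python
from typing import Optional

DHCP_MESSAGE_TYPES = {
    1: "DHCPDISCOVER", 2: "DHCPOFFER", 3: "DHCPREQUEST", 4: "DHCPDECLINE",
    5: "DHCPACK", 6: "DHCPNAK", 7: "DHCPRELEASE", 8: "DHCPINFORM",
}
MAGIC_COOKIE = b"\x63\x82\x53\x63"
OPTIONS_OFFSET = 236  # length of the fixed BOOTP header (op ... file fields)


def dhcp_message_type(payload: bytes) -> Optional[str]:
    """Return the DHCP message type name from a UDP payload, or None if it isn't found."""
    cookie_end = OPTIONS_OFFSET + len(MAGIC_COOKIE)
    if payload[OPTIONS_OFFSET:cookie_end] != MAGIC_COOKIE:
        return None  # too short or not a DHCP packet
    i = cookie_end
    while i < len(payload):
        code = payload[i]
        if code == 0:      # Pad option: single byte, no length field
            i += 1
            continue
        if code == 255:    # End option
            break
        if i + 1 >= len(payload):
            break          # truncated option
        length = payload[i + 1]
        value = payload[i + 2:i + 2 + length]
        if code == 53 and length == 1 and len(value) == 1:
            return DHCP_MESSAGE_TYPES.get(value[0], f"unknown({value[0]})")
        i += 2 + length
    return None
```

Seeing a DHCPDECLINE right after a DHCPACK in a capture would confirm that the client (not the server) is rejecting the address.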