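Post-quantum cryptography goes GA

Over the last twelve months, we have been talking about the new baseline of encryption on the Internet: post-quantum cryptography. During Birthday Week last year we announced that our beta of Kyber was available for testing, and that Cloudflare Tunnel could be enabled with post-quantum cryptography. Earlier this year, we made our stance clear that this foundational technology should be available to everyone for free, forever.

Today, we have hit a milestone after six years and 31 blog posts in the making: we're starting to roll out General Availability [1] of post-quantum cryptography support to our customers, services, and internal systems, as described more fully below. This includes products like Pingora for origin connectivity, 1.1.1.1, R2, Argo Smart Routing, Snippets, and many more.

This is a milestone for the Internet. We don't yet know when quantum computers will have enough scale to break today's cryptography, but the benefits of upgrading to post-quantum cryptography now are clear. Fast connections and future-proofed security are all possible today because of the advances made by Cloudflare, Google, Mozilla, the National Institute of Standards and Technology in the United States, the Internet Engineering Task Force, and numerous academic institutions.

What does General Availability mean?

In October 2022 we enabled X25519+Kyber as a beta for all websites and APIs served through Cloudflare. However, it takes two to tango: the connection is only secured if the browser also supports post-quantum cryptography. Starting in August 2023, Chrome is gradually enabling X25519+Kyber by default.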
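The requirement that both sides support post-quantum key agreement can be illustrated with a minimal sketch of how a key-agreement group gets picked. This is a simplified model under stated assumptions, not Cloudflare's or any browser's actual TLS implementation: the function names `negotiate_group` and `is_post_quantum` are hypothetical, the group lists are illustrative, and real TLS 1.3 negotiation also involves key shares and possible retries. The only identifiers taken from this post are X25519 and X25519Kyber768Draft00.

```python
# Simplified, hypothetical model of picking a key-agreement group.
# Real TLS 1.3 negotiation is more involved (key shares, retries).

# Groups treated as post-quantum in this sketch (name taken from the post).
PQ_GROUPS = {"X25519Kyber768Draft00"}

# Assumed server policy: prefer the hybrid group, fall back to classical X25519.
SERVER_PREFERENCE = ["X25519Kyber768Draft00", "X25519"]


def negotiate_group(client_groups, server_preference):
    """Return the first group in the server's preference order that the
    client also offers, or None if there is no overlap."""
    offered = set(client_groups)
    for group in server_preference:
        if group in offered:
            return group
    return None


def is_post_quantum(group):
    """True if the negotiated group is one of the post-quantum groups."""
    return group in PQ_GROUPS


if __name__ == "__main__":
    clients = {
        "browser with PQ support": ["X25519Kyber768Draft00", "X25519"],
        "browser without PQ support": ["X25519", "secp256r1"],
    }
    for name, groups in clients.items():
        chosen = negotiate_group(groups, SERVER_PREFERENCE)
        print(name, "->", chosen, "| post-quantum:", is_post_quantum(chosen))
```

In this model a connection is post-quantum only when the client offers the hybrid group; otherwise the server falls back to classical X25519, which is why enabling support on the server side alone is not enough.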

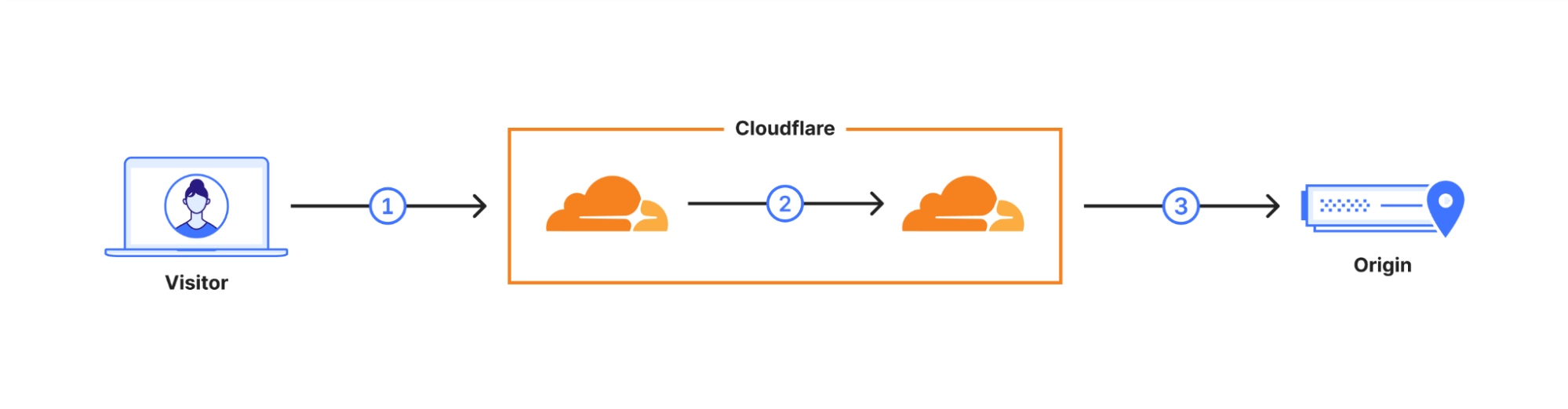

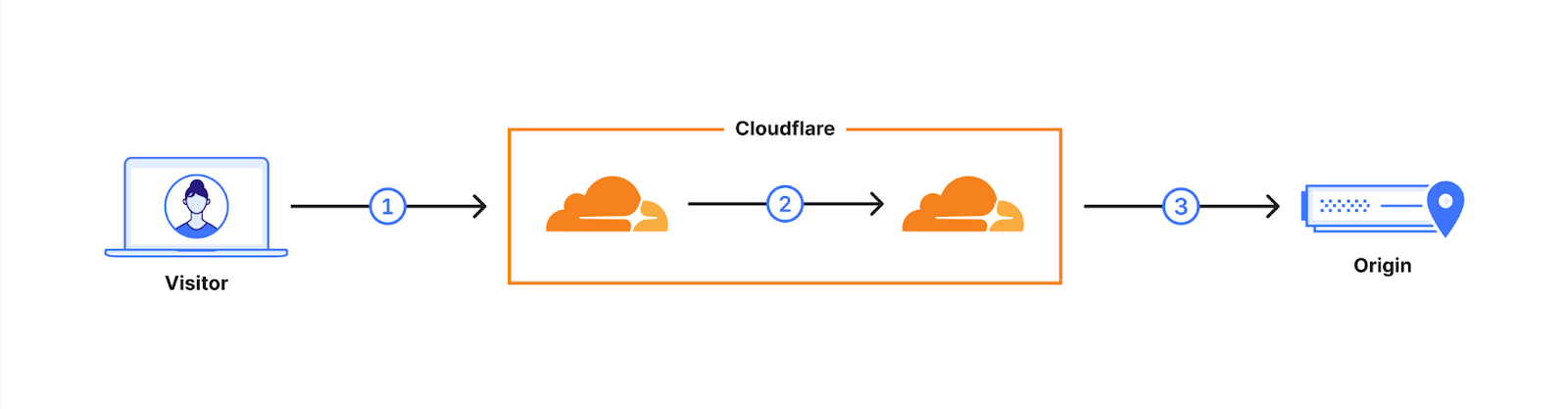

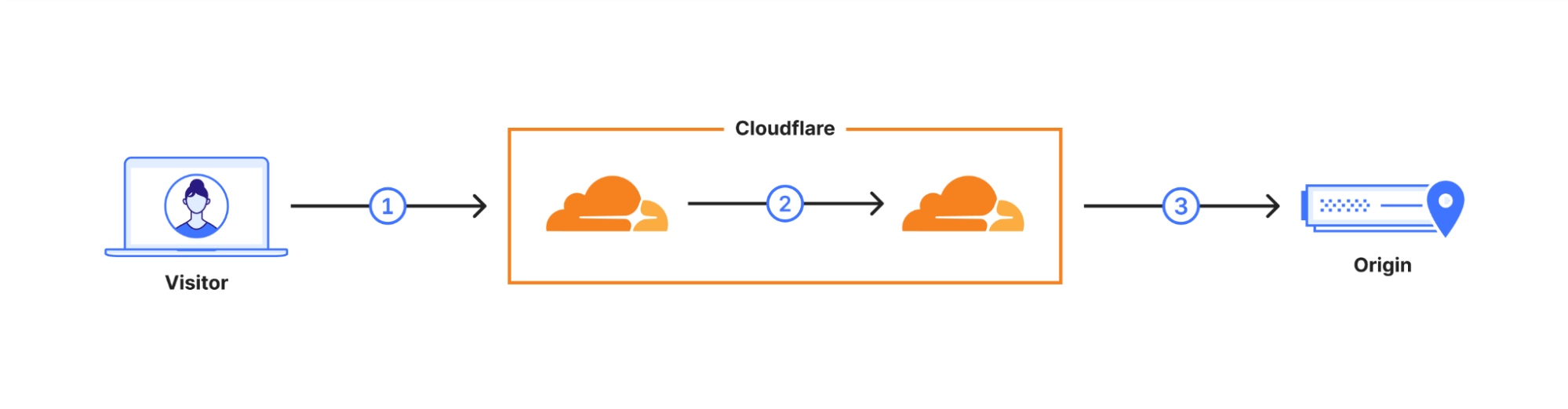

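The user's request is routed through Cloudflare's network (2). We have upgraded many of these internal connections to use post-quantum cryptography, and we expect to finish upgrading all of our internal connections by the end of 2024. That leaves, as the final link, the connection (3) between us and the origin server.

We are happy to announce that we are rolling out support for X25519+Kyber for most inbound and outbound connections as Generally Available, including connections to origin servers and Cloudflare Workers fetch() calls.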

| Plan | Support for post-quantum outbound connections |
|---|---|
| Free | Roll-out started. Aiming for 100% by the end of October. |
| Pro and Business | Aiming for 100% by the end of the year. |
| Enterprise | Roll-out begins February 2024. 100% by March 2024. |

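For our Enterprise customers, we will be sending out additional information regularly over the next six months to help you prepare for the roll-out. Pro, Business, and Enterprise customers can skip the roll-out and opt in within their zone today, or opt out ahead of time using the API described in our companion blog post. Before rolling out for Enterprise in February 2024, we will add a toggle on the dashboard to opt out.

If you're excited to get started now, check out our blog with the technical details and turn on post-quantum cryptography support via the API!

What's included and what is next?

With an upgrade of this magnitude, we wanted to focus on our most used products first and then expand outward to cover our edge cases. This process has led us to include the following products and systems in this roll-out: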

| Products and systems included in this roll-out |
|---|
| 1.1.1.1 |
| AMP |
| API Gateway |
| Argo Smart Routing |
| Auto Minify |
| Automatic Platform Optimization |
| Automatic Signed Exchanges |
| Cloudflare Egress |
| Cloudflare Images |
| Cloudflare Rulesets |
| Cloudflare Snippets |
| Cloudflare Tunnel |
| Custom Error Pages |
| Flow Based Monitoring |
| Health checks |
| Hermes |
| Host Head Checker |
| Magic Firewall |
| Magic Network Monitoring |
| Network Error Logging |
| Project Flame |
| Quicksilver |
| R2 Storage |
| Request Tracer |
| Rocket Loader |
| Speed on Cloudflare Dash |
| SSL/TLS |
| Traffic Manager |
| WAF, Managed Rules |
| Waiting Room |
| Web Analytics |

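If a product or service you use is not listed here, we have not started rolling out post-quantum cryptography to it yet. We are actively working on rolling out post-quantum cryptography to all products and services, including our Zero Trust products. Until we have achieved post-quantum cryptography support in all of our systems, we will publish an update blog every Innovation Week covering which products we have rolled out post-quantum cryptography to, which products will get it next, and what is still on the horizon.

Products we are working on bringing post-quantum cryptography support to soon: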

| Products coming soon |
|---|
| Cloudflare Gateway |
| Cloudflare DNS |
| Cloudflare Load Balancer |
| Cloudflare Access |
| Always Online |
| Zaraz |
| Logging |
| D1 |
| Cloudflare Workers |
| Cloudflare WARP |
| Bot Management |

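Why now?

As we announced earlier this year, post-quantum cryptography will be included for free in all Cloudflare products and services that can support it. The best encryption technology should be accessible to everyone, helping to support privacy and human rights globally.

As we mentioned in March:

"What was once an experimental frontier has turned into the underlying fabric of modern society. It runs in our most critical infrastructure like power systems, hospitals, airports, and banks. We trust it with our most precious memories. We trust it with our secrets. That's why the Internet needs to be private by default. It needs to be secure by default."

Our work on post-quantum cryptography is driven by the thesis that quantum computers able to break conventional cryptography create a problem similar to the Year 2000 bug. We know there is going to be a problem in the future that could have catastrophic consequences for users, businesses, and even nation states. The difference this time is that we don't know when or how this break in the computational paradigm will occur. Worse, any traffic captured today could be decrypted in the future. We need to prepare today to be ready for this threat.

We are excited for everyone to adopt post-quantum cryptography in their systems. To follow the latest developments in our deployment of post-quantum cryptography and third-party client/server support, check out pq.cloudflareresearch.com and keep an eye on this blog.

[1] We are using a preliminary version of Kyber, NIST's pick for post-quantum key agreement. Kyber has not been finalized. We expect the final standard to be published in 2024 under the name ML-KEM, which we will then adopt promptly while deprecating support for X25519Kyber768Draft00.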

Encrypted Client Hello – the last puzzle piece to privacy

Today we are excited to announce a contribution to improving privacy for everyone on the Internet. Encrypted Client Hello, a new proposed standard that prevents networks from snooping on which websites a user is visiting, is now available on all Cloudflare plans.

Encrypted Client Hello (ECH) is a successor to ESNI and masks the Server Name Indication (SNI) that is used to negotiate a TLS handshake. This means that whenever a user visits a website on Cloudflare that has ECH enabled, no one except for the user, Cloudflare, and the website owner will be able to determine which website was visited. Cloudflare is a big proponent of privacy for everyone and is excited about the prospects of bringing this technology to life.

Browsing the Internet and your privacy

Whenever you visit a website, your browser sends a request to a web server. The web server responds with content and the website starts loading in your browser. Way back in the early days of the Internet this happened in 'plain text', meaning that your browser would just send bits across the network that everyone could read: the corporate network you may be browsing from, the Internet Service Provider that offers Continue reading

Cloudflare now uses post-quantum cryptography to talk to your origin server

Quantum computers pose a serious threat to security and privacy of the Internet: encrypted communication intercepted today can be decrypted in the future by a sufficiently advanced quantum computer. To counter this store-now/decrypt-later threat, cryptographers have been hard at work over the last decades proposing and vetting post-quantum cryptography (PQC), cryptography that’s designed to withstand attacks of quantum computers. After a six-year public competition, in July 2022, the US National Institute of Standards and Technology (NIST), known for standardizing AES and SHA, announced Kyber as their pick for post-quantum key agreement. Now the baton has been handed to Industry to deploy post-quantum key agreement to protect today’s communications from the threat of future decryption by a quantum computer.

Cloudflare operates as a reverse proxy between clients (“visitors”) and customers’ web servers (“origins”), so that we can protect origin sites from attacks and improve site performance. In this post we explain how we secure the connection from Cloudflare to origin servers. To put that in context, let’s have a look at the connection involved when visiting an uncached page on a website served through Cloudflare.

The first connection is from the visitor’s browser to Cloudflare. In October 2022, we enabled X25519+Kyber Continue reading

Privacy-preserving measurement and machine learning

In 2023, data-driven approaches to making decisions are the norm. We use data for everything from analyzing x-rays to translating thousands of languages to directing autonomous cars. However, when it comes to building these systems, the conventional approach has been to collect as much data as possible, and worry about privacy as an afterthought.

The problem is, data can be sensitive and used to identify individuals – even when explicit identifiers are removed or noise is added.

Cloudflare Research has been interested in exploring different approaches to this question: is there a truly private way to perform data collection, especially for some of the most sensitive (but incredibly useful!) technology?

Some of the use cases we’re thinking about include: training federated machine learning models for predictive keyboards without collecting every user’s keystrokes; performing a census without storing data about individuals’ responses; providing healthcare authorities with data about COVID-19 exposures without tracking people’s locations en masse; figuring out the most common errors browsers are experiencing without reporting which websites users are visiting.

It’s with those use cases in mind that we’ve been participating in the Privacy Preserving Measurement working group at the IETF whose goal is to develop systems Continue reading

Network performance update: Birthday Week 2023

We constantly measure our own network’s performance against other networks, look for ways to improve our performance compared to them, and share the results of our efforts. Since June 2021, we’ve been sharing benchmarking results we’ve run against other networks to see how we compare.

In this post we are going to share the most recent updates since our last post in June, and tell you about our tools and processes that we use to monitor and improve our network performance.

How we stack up

Since June 2021, we’ve been taking a close look at every single network and taking actions for the specific networks where we have some room for improvement. Cloudflare was already the fastest provider for most of the networks around the world (we define a network as a country and AS number pair). Taking a closer look at the numbers: in July 2022, Cloudflare was ranked #1 in 33% of the networks and was within 2 ms (95th percentile TCP Connection Time) or 5% of the #1 provider for 8% of the networks that we measured. For reference, our closest competitor on that front was the fastest for 20% of networks.
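The "within 2 ms or 5%" comparison above can be made concrete with a small sketch. It assumes a nearest-rank definition of the 95th percentile and reads "5%" as relative to the fastest provider's value. Both are assumptions, as are the function names and the sample numbers, none of which come from Cloudflare's measurement pipeline.

```python
# Hypothetical sketch: classify a provider on one network (country + AS number)
# by its 95th percentile TCP connection time relative to the fastest provider.

def p95(samples_ms):
    """Nearest-rank 95th percentile of a non-empty list of times in ms."""
    ordered = sorted(samples_ms)
    # Integer ceiling of 0.95 * n, avoiding floating-point rounding.
    rank = (95 * len(ordered) + 99) // 100
    return ordered[rank - 1]


def classify(provider, p95_by_provider, abs_ms=2.0, rel=0.05):
    """Return 'fastest', 'close' (within abs_ms or rel of the best), or 'behind'."""
    best = min(p95_by_provider.values())
    mine = p95_by_provider[provider]
    if mine == best:
        return "fastest"
    gap = mine - best
    if gap <= abs_ms or gap <= rel * best:
        return "close"
    return "behind"


if __name__ == "__main__":
    # Made-up p95 values (ms) for three hypothetical providers on one network.
    network = {"provider_a": 100.0, "provider_b": 101.5, "provider_c": 110.0}
    for name in network:
        print(name, classify(name, network))
```

Counting how many networks fall into each bucket for a given provider would then give percentages of the kind quoted above.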

As of August 30, Continue reading

See what threats are lurking in your Office 365 with Cloudflare Email Retro Scan

We are now announcing the ability for Cloudflare customers to scan old messages within their Office 365 Inboxes for threats. This Retro Scan will let you look back seven days and see what threats your current email security tool has missed.

Why run a Retro Scan

Speaking with customers, we often hear that they do not know the condition of their organization’s mailboxes. Organizations have an email security tool or use Microsoft’s built-in protection but do not understand how effective their current solution is. We find that these tools often let malicious emails through their filters, increasing the risk of compromise within the company.

In our pursuit to help build a better Internet, we are enabling Cloudflare customers to use Retro Scan to scan messages within their inboxes using our advanced machine learning models for free. Our Retro Scan will detect and highlight any threats we find so that customers can clean up their inboxes by addressing them within their email accounts. With this information, customers can also implement additional controls, such as using Cloudflare or their preferred solution, to prevent similar threats from reaching their mailbox in the future.

Running a Retro Scan

Customers can navigate to the Cloudflare Continue reading

Detecting zero-days before zero-day

We are constantly researching ways to improve our products. For the Web Application Firewall (WAF), the goal is simple: keep customer web applications safe by building the best solution available on the market.

In this blog post we talk about our approach and ongoing research into detecting novel web attack vectors in our WAF before they are seen by a security researcher. If you are interested in learning about our secret sauce, read on.

This post is the written form of a presentation first delivered at Black Hat USA 2023.

The value of a WAF

Many companies offer web application firewalls and application security products with a total addressable market forecasted to increase for the foreseeable future.

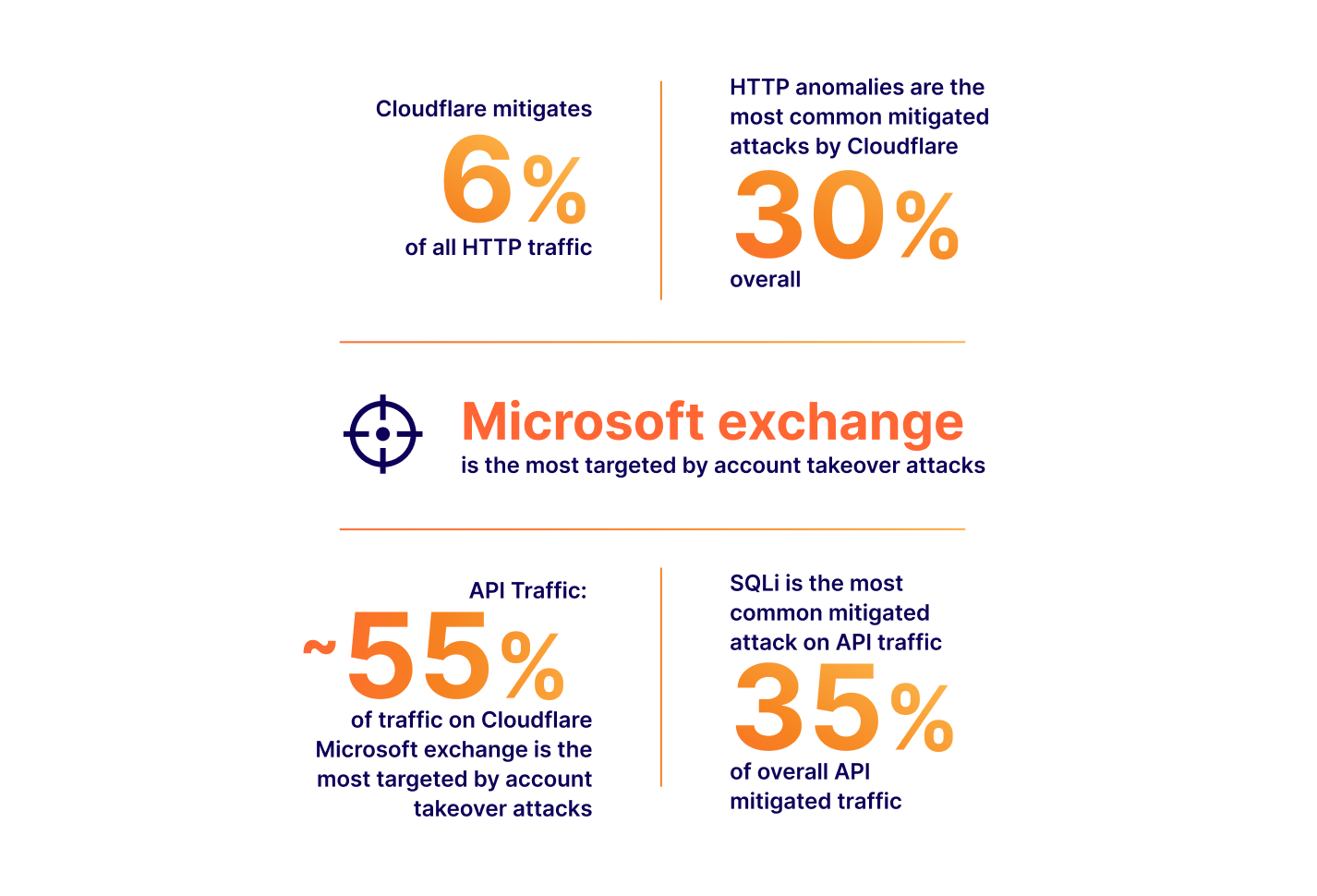

In this space, vendors, including ourselves, often like to boast about the importance of their solution by presenting ever-growing statistics around threats to web applications. Bigger numbers and scarier stats are great ways to justify expensive investments in web security. Taking a few examples from our very own application security report research (see our latest report here):

The numbers above all translate to real value: yes, a large portion of Internet HTTP traffic is malicious, therefore you could mitigate a non-negligible amount Continue reading

Post-quantum cryptography goes GA

Over the last twelve months, we have been talking about the new baseline of encryption on the Internet: post-quantum cryptography. During Birthday Week last year we announced that our beta of Kyber was available for testing, and that Cloudflare Tunnel could be enabled with post-quantum cryptography. Earlier this year, we made our stance clear that this foundational technology should be available to everyone for free, forever.

Today, we have hit a milestone after six years and 31 blog posts in the making: we’re starting to roll out General Availability of post-quantum cryptography support to our customers, services, and internal systems as described more fully below. This includes products like Pingora for origin connectivity, 1.1.1.1, R2, Argo Smart Routing, Snippets, and so many more.

This is a milestone for the Internet. We don't yet know when quantum computers will have enough scale to break today's cryptography, but the benefits of upgrading to post-quantum cryptography now are clear. Fast connections and future-proofed security are all possible today because of the advances made by Cloudflare, Google, Mozilla, the National Institute of Standards and Technology in the United States, the Internet Engineering Task Force, and numerous academic institutions.

What does Continue reading

Easily manage AI crawlers with our new bot categories

Today, we’re excited to announce that any Cloudflare user, on any plan, can choose specific categories of bots that they want to allow or block, including AI crawlers.

As the popularity of generative AI has grown, content creators and policymakers around the world have started to ask questions about what data AI companies are using to train their models without permission. As with all new innovative technologies, laws will likely need to evolve to address different parties' interests and what’s best for society at large. While we don’t know how it will shake out, we believe that website operators should have an easy way to block unwanted AI crawlers and to also let AI bots know when they are permitted to crawl their websites.

The good news is that Cloudflare already automatically stops scraper bots today. But we want to make it even easier for customers to be sure they are protected, see how frequently AI scrapers might be visiting their sites, and respond to them in more targeted ways. We also recognize that not all AI crawlers are the same and that some AI companies are looking for clear instructions for when they should not crawl a public website.

Continue reading

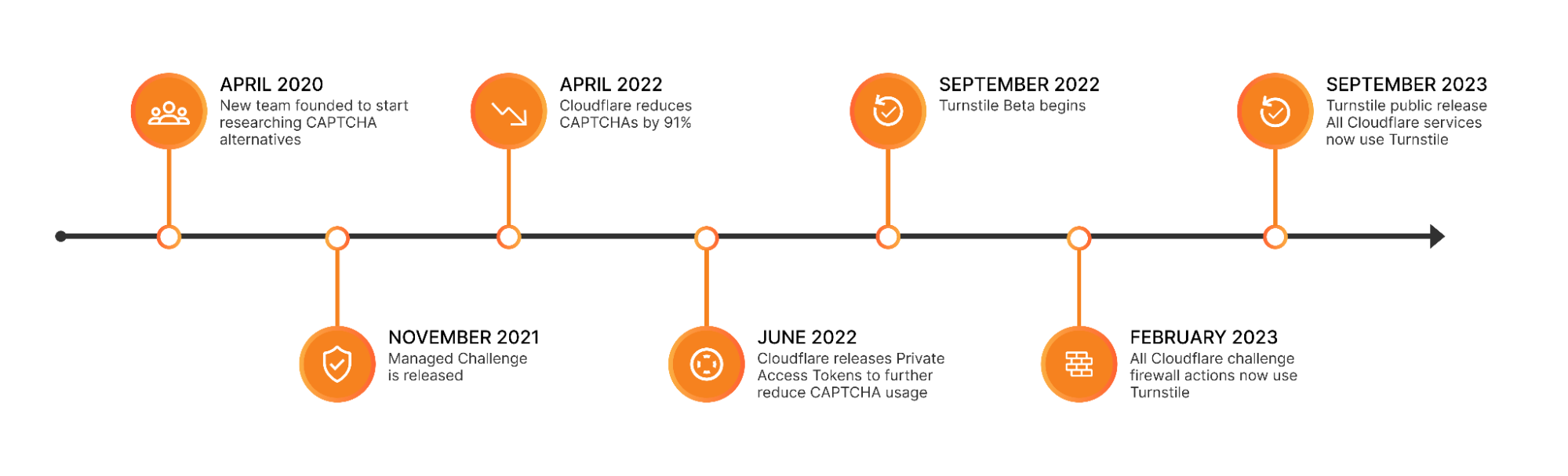

Cloudflare is free of CAPTCHAs; Turnstile is free for everyone

For years, we’ve written that CAPTCHAs drive us crazy. Humans give up on CAPTCHA puzzles approximately 15% of the time and, maddeningly, CAPTCHAs are significantly easier for bots to solve than they are for humans. We’ve spent the past three and a half years working to build a better experience for humans that’s just as effective at stopping bots. As of this month, we’ve finished replacing every CAPTCHA issued by Cloudflare with Turnstile, our new CAPTCHA replacement (pictured below). Cloudflare will never issue another visual puzzle to anyone, for any reason.

Now that we’ve eliminated CAPTCHAs at Cloudflare, we want to make it easy for anyone to do the same, even if they don’t use other Cloudflare services. We’ve decoupled Turnstile from our platform so that any website operator on any platform can use it just by adding a few lines of code. We’re thrilled to announce that Turnstile is now generally available, and Turnstile’s ‘Managed’ mode is now completely free to everyone for unlimited use.

Easy on humans, hard on bots, private for everyone

There’s a lot that goes into Turnstile’s simple checkbox to ensure that it’s easy for everyone, preserves user privacy, and does its job stopping bots. Continue reading

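Welcome to connectivity cloud: the modern way to connect and protect your clouds, networks, applications, and users

Our favorite part of the job is the time we spend talking to Cloudflare customers. We always learn something new and interesting about their IT and security challenges.

Recently, something about those conversations has changed. More and more, the biggest challenge customers mention is one that is not easy to define. And it is not something that can be addressed with an individual product or feature.

More precisely, IT and security teams tell us that they are losing control of their digital environment.

This loss of control takes many forms. Customers may be hesitant to adopt a new capability they know they need because they are worried about compatibility. Or they may mention how much time and effort it takes to make relatively simple changes, and how those changes take time away from more impactful work. To sum up the feeling: "No matter how large my team or budget, it is never enough to fully connect and protect the business."

Does any of this feel familiar? If so, you are not alone in feeling that way.

Reasons for the loss of control

The pace of change in IT and security is accelerating, and things are becoming dauntingly complex. IT and security teams are responsible for a wider variety of technology domains than in the past. According to shifts identified in a recent Forrester study, of the teams responsible for securing in-office, remote, and hybrid workers, 52% only took on that responsibility in the past five years. Meanwhile, for 46%, during that same period, public cloud applications Continue reading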

Discover what threats are lurking in your Office 365 mailbox with Cloudflare Email Retro Scan

Cloudflare customers can now scan old messages in their Office 365 inboxes for threats. Retro Scan will let you see what threats your current email security tool has missed over the last seven days.

Why run Retro Scan

Speaking with customers, we often hear that they do not know the condition of their organizations' mailboxes. Organizations have an email security tool or use Microsoft's built-in protection, but do not understand how effective their current solution is. We often find that these tools let malicious emails through their filters, which increases risk within the company.

In our pursuit to help build a better Internet, we are enabling Cloudflare customers to use Retro Scan to scan messages in their inboxes with our advanced machine learning models, for free! Our Retro Scan detects and highlights the threats we find so that customers can clean up their inboxes and address them within their email accounts. With this information, customers can also implement Continue reading

See what threats are hiding in your Office 365 with Cloudflare Email Retro Scan

We are now announcing the ability for Cloudflare customers to scan old messages in their Office 365 inboxes for threats. This Retro Scan will let you look back seven days and see what threats your current email security tool has missed.

Why run a Retro Scan

Speaking with customers, we often hear that they do not know the condition of their organizations' inboxes. Organizations have an email security tool or use Microsoft's built-in protection, but do not understand how effective their current solution is. We find that these tools often let malicious emails through their filters, increasing the risk of compromise within the company.

In our pursuit to help build a better Internet, we are enabling Cloudflare customers to use Retro Scan to scan messages in their inboxes using our advanced machine learning models, for free. Our Retro Scan detects and highlights any threats we find, so that customers can clean up their inboxes by addressing them within their email accounts. With this information, customers can also implement additional controls, such as using Cloudflare or their preferred solution, Continue reading

See what threats are lurking in your Office 365 with Cloudflare's Retro Scan for email

Starting now, Cloudflare customers can scan old messages in their Office 365 mailboxes for threats. With Retro Scan you can review the past seven days to see what threats your current email security tool has missed.

Reasons to run a Retro Scan

Customers often tell us that they do not know what condition their companies' mailboxes are in. Companies use an email security tool or Microsoft's built-in protection, but often do not know how effectively their current solution actually works. We have found that these tools often fail to filter out malicious emails, which increases the risk of compromise within the company.

As part of our effort to build a better Internet, we are now making Retro Scan available to Cloudflare customers. With it, they can scan messages in their mailboxes for free using advanced machine learning models. Our Retro Scan detects threats and points them out, so that customers can clean up their mailboxes by remediating them within their email accounts. With this information they are also able to implement additional controls: they can deploy Cloudflare or their preferred solution to prevent similar threats from reaching their mailboxes in the first place.

Running a Retro Scan

Discover the threats hiding in your Office 365 mailbox with Cloudflare Email Retro Scan

We are now announcing the ability for Cloudflare customers to scan old messages in their Office 365 inboxes to detect threats. The Retro Scan service lets you go back seven days to identify threats that your current security tool did not detect.

Why run the Retro Scan service

When we talk with our customers, they often tell us that they are not aware of the state of their company's mailboxes. Companies have an email security tool, or they use Microsoft's built-in protection, but they are unable to gauge how effective their current solution is. We find that the filters of these tools often let malicious emails through, increasing the risk of data compromise within companies.

In keeping with our commitment to help build a better Internet, we now allow Cloudflare customers to use Retro Scan to scan the messages in their inboxes using our advanced machine learning models, and to do so for free. Our Retro Scan service will detect and highlight all the threats we identify, in order to allow Continue reading