Cybersecurity, Data Protection, and IoT Events in November & December

The end of the year has been very busy, with Internet Society staff members speaking at many events on data protection, security-by-design, and the Internet of Things (IoT). First, to recap the last month, you might want to read the Rough Guide to IETF 103, especially Steve Olshansky’s Internet of Things post. Dan York also talked about DNSSEC and the Root KSK Rollover at ICANN 63, and there were several staff members involved in security, privacy, and access discussions at the Internet Governance Forum. In addition, we submitted comments on NIST’s white paper on Internet of Things (IoT) Trust Concerns; the NTIA RFC on Developing the Administration’s Approach to Consumer Privacy; and the NIST draft “Considerations for Managing Internet of Things (IoT) Cybersecurity and Privacy Risks”.

We also have several speaking engagements coming up in the next few weeks. Here’s a quick rundown of the events.

6th National Cybersecurity Conference

27-28 November

Mona, Jamaica

The Mona ICT Policy Centre at CARIMAC, University of the West Indies is hosting the 6th National Cyber Security Conference. The Conference theme this year is “Data Protection – Securing Big Data, Understanding Biometrics and Protecting National ID Systems.” Continue reading

Hygiene of Network Automation

David Gee decided to talk about hygiene of network automation in the Spring 2019 Building Network Automation Solutions online course, and (not surprisingly) Christoph Jaggi wanted to know more:

You highlight the hygiene of automation. What is it and why does it matter?

Hygiene is the important but boring bit of automation most beginners and amateurs pass by.

Read more ...

BEAT: asynchronous BFT made practical

BEAT: asynchronous BFT made practical Duan et al., CCS’18

Reaching agreement (consensus) is hard enough; doing it in the presence of active adversaries who can tamper with or destroy your communications is much harder still. That’s the world of Byzantine fault tolerance (BFT). We’ve looked at Practical BFT (PBFT) and HoneyBadger in previous editions of The Morning Paper. Today’s paper, BEAT, builds on top of HoneyBadger to offer BFT with even better latency and throughput.
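For readers new to BFT, the fault model can be made concrete with a little arithmetic. The sketch below assumes the standard resilience bound for this family of protocols, n ≥ 3f + 1 (n nodes, at most f of them Byzantine); the excerpt above does not spell this out, and the function names are made up for illustration.

```python
def max_byzantine_faults(n: int) -> int:
    """Largest f satisfying n >= 3f + 1 (assumed standard BFT bound)."""
    if n < 1:
        raise ValueError("need at least one node")
    return (n - 1) // 3


def wait_threshold(n: int) -> int:
    """Messages a node can safely wait for in an asynchronous network.

    Up to f nodes may never answer, so waiting for more than n - f
    messages could block forever.
    """
    return n - max_byzantine_faults(n)


if __name__ == "__main__":
    for n in (4, 7, 10):
        print(n, max_byzantine_faults(n), wait_threshold(n))
```

Under that bound, any two sets of n - f responders overlap in at least n - 2f ≥ f + 1 nodes, so the overlap always contains at least one honest node.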

Asynchronous BFT protocols are arguably the most appropriate solutions for building high-assurance and intrusion-tolerant permissioned blockchains in wide-area (WAN) environments, as these asynchronous protocols are inherently more robust against timing and denial-of-service (DoS) attacks that can be mounted over an unprotected network such as the Internet.

The best performing asynchronous BFT protocol, HoneyBadger, still lags behind the partially synchronous PBFT protocol in terms of throughput and latency. BEAT is actually a family of five different asynchronous BFT protocols that start from the HoneyBadger baseline and make improvements targeted at different application scenarios.

Unlike HoneyBadgerBFT, which was designed to optimize throughput only, BEAT aims to be flexible and versatile, providing protocol instances optimized for latency, throughput, bandwidth, or scalability (in terms of the number Continue reading

Working out Backend Knots and Building Routers (Austin Real World Serverless Video Recap)

I work in Developer Relations at Cloudflare and I'm fortunate to have top-notch developers around me who are willing to share their knowledge with the greater developer community. I produced a series of events this autumn called Real World Serverless at multiple locations around the world and I want to share the recorded videos from these events.

Our Austin Real World Serverless event (in partnership with the ATX Serverless User Group Meetup) included two talks about Serverless technology featuring Victoria Bernard and Preston Pham from Cloudflare. They spoke about working out backend knots with Workers and building a router for the great good.

About the talks

Working out Backend Knots with Workers - Victoria Bernard (0:00-15:19)

Cloudflare Workers is a platform that makes serverless development and deployment easier than ever. A worker is a script running between your clients' browsers and your site's origin that can intercept requests. Victoria went over some popular use cases of how proxy workers can dramatically improve a site's performance and add functionality that would normally require toying with complicated back-end services.
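The "script between the browser and the origin" idea can be sketched in a few lines. This is a language-neutral illustration written in Python, not the Workers API (Workers are written in JavaScript), and `Request`, `Response`, `make_proxy`, and the `x-cache` header are all hypothetical names chosen for the example. It shows one of the simplest proxy use cases: answering repeat GET requests from a cache instead of going back to the origin.

```python
from typing import Callable, Dict, NamedTuple


class Request(NamedTuple):
    method: str
    path: str


class Response(NamedTuple):
    status: int
    body: str
    headers: Dict[str, str]


def make_proxy(fetch_origin: Callable[[Request], Response]) -> Callable[[Request], Response]:
    """Wrap an origin fetcher with a tiny in-memory cache (illustrative only)."""
    cache: Dict[str, Response] = {}

    def handle(request: Request) -> Response:
        # Intercept: serve repeat GETs without touching the origin.
        if request.method == "GET" and request.path in cache:
            cached = cache[request.path]
            return cached._replace(headers={**cached.headers, "x-cache": "HIT"})

        # Otherwise forward to the origin and remember successful GETs.
        response = fetch_origin(request)
        if request.method == "GET" and response.status == 200:
            cache[request.path] = response
        return response._replace(headers={**response.headers, "x-cache": "MISS"})

    return handle
```

A real deployment would also need cache expiry and would have to respect the origin's caching headers, both of which are left out here.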

Build a Router for Great Good - Preston Pham (15:20-33:53)

Serverless computing is great, but requires routing or some kind of API Continue reading

Weekly Top Posts: 2018-11-25

- Verizon Buys Software-Defined Perimeter Assets From Vidder

- IBM Boosts Cloud Migration, Management

- Citrix Buys Sapho for $200M to Grow Its Digital Workspace Tech

- Microsoft, IBM Top ABI’s Blockchain Platform Provider List

- ZTE Flips the RAN Script on Samsung in Q3, Says Dell’Oro

My Second Cloudflare Company Retreat

Last week, 760 humans from Singapore, London, Beijing, Sydney, Nairobi, Austin, New York, Miami, Washington DC, Warsaw, Munich, Brussels, and Champaign reunited with their San Francisco counterparts for our 9th annual Cloudflare company retreat in the San Francisco Bay Area. The purpose of the company retreat is to bring all global employees together under one roof to bond, build bridges, have fun, and learn – all in support of Cloudflare’s mission to help build a better Internet.

It’s easy to write off corporate retreats as an obligatory series of meetings and tired speeches, but Cloudflare’s retreats are uniquely engaging, personalized, fun, and inspiring. Having grown with Cloudflare over the last year (I started just before our 2017 retreat), I wanted to share some of my experiences to highlight Cloudflare’s incredible culture.

The office was buzzing with different languages and laughter as people hugged and shook hands for the first time after working online together for a year or more. Everyone’s Google calendar looked like a rainbow as we each mined for white space to squeeze in those coveted 1:1s, all-hands presentations, and bowling offsites with our global colleagues. The buses and Google chats felt like summer camp, with people claiming Continue reading

Stuff The Internet Says On Scalability For November 23rd, 2018

Wake up! It's HighScalability time:

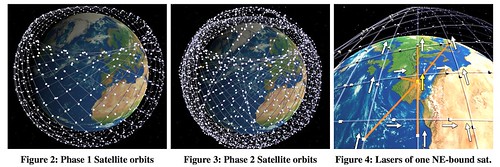

Curious how SpaceX's satellite constellation works? Here's some fancy FCC reverse engineering magic. (Delay is Not an Option: Low Latency Routing in Space, Murat)

Do you like this sort of Stuff? Please support me on Patreon. I'd really appreciate it. Know anyone looking for a simple book explaining the cloud? Then please recommend my well reviewed (30 reviews on Amazon and 72 on Goodreads!) book: Explain the Cloud Like I'm 10. They'll love it and you'll be their hero forever.

- 5: iOS 12 jailbreaks; ~43 million: dataset of atomic Wikipedia edits; 1.88 exaops: peak throughput Summit supercomputer; 2 million: ESPN subscribers lost to cord cutters; 302: neurons show simple behavior of learning and memory; 224: seconds to train a ResNet-50 on ImageNet to an accuracy of approximately 75%; 120 milliseconds: space shuttle tolerances; $1 billion: Airbnb revenue; 900+ mph: tip of a whip;

- Quotable Quotes:

- @JeffDean: This thread is important. Bringing the best and brightest students from around the world to further their studies in the U.S. has been a key to success in many technological Continue reading

How and Why to Give 4G the 5G Treatment

MEC is an idea whose time has come. And for mobile operators, advertisers, and OTT providers, it's also an idea that can't come soon enough.

Attitude and Gratitude

I don’t often let my studies in philosophy and worldview creep into these pages intentionally. I don’t think it can be helped, of course, because the more I study philosophy, the more I see just how practical it is (contrary to popular belief). On the other hand, sometimes an observation about our world jumps out at me so strongly that I cannot help but post about it here. If you don’t want to hear this, I give you permission to stop reading now.

Today, in the U.S., is what is called “Black Friday.” The name derives from a major stock market crash in the 1850s, but was eventually applied to the combined shopping and football crowds the day after Thanksgiving by the Philadelphia Police, and now, finally, to the general shopping day after Thanksgiving in the U.S.

Thanksgiving is all about giving thanks. About gathering family and friends, and appreciating community, and people, and the shared blessings of homes and meals together. It is interesting that Thanksgiving and Black Friday are juxtaposed in just this way. The family right up against the commercial, the quietness of the home against the loudness of the market. Maybe Continue reading

5 Ways to Improve Video and Real-time Enterprise Apps

By taking a more proactive approach to mobile worker connectivity, enterprises can not only significantly improve productivity but also decrease company support costs.

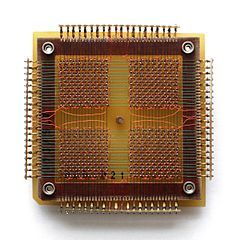

Every 7.8μs your computer’s memory has a hiccup

Modern DDR3 SDRAM. Source: BY-SA/4.0 by Kjerish

During my recent visit to the Computer History Museum in Mountain View, I found myself staring at some ancient magnetic core memory.

Source: BY-SA/3.0 by Konstantin Lanzet

I promptly concluded I had absolutely no idea how these things could ever work. I wondered if the rings rotate (they don't), and why each ring has three wires woven through it (and I still don’t understand exactly how these work). More importantly, I realized I have very little understanding of how modern computer memory - dynamic RAM - works!

Source: Ulrich Drepper's series about memory

I was particularly interested in one of the consequences of how dynamic RAM works. You see, each bit of data is stored by the charge (or lack of it) on a tiny capacitor within the RAM chip. But these capacitors gradually lose their charge over time. To avoid losing the stored data, they must regularly get refreshed to restore the charge (if present) to its original level. This refresh process involves reading the value of every bit and then writing it back. During this "refresh" time, the memory is busy and it can't perform normal operations Continue reading
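The 7.8μs figure in the title falls out of simple arithmetic. The sketch below assumes a common DDR3-style arrangement that the excerpt above does not state: every row must be refreshed within a 64 ms window, and the controller spreads 8192 refresh commands evenly across that window. The 260 ns refresh duration in the usage lines is an illustrative value, not one taken from the post.

```python
# Assumed DDR3-style figures (not given in the text above).
RETENTION_WINDOW_MS = 64    # every row must be refreshed within this window
REFRESH_COMMANDS = 8192     # refresh commands issued per window


def refresh_interval_us(window_ms: float = RETENTION_WINDOW_MS,
                        commands: int = REFRESH_COMMANDS) -> float:
    """Average time between refresh commands, in microseconds."""
    return window_ms * 1000.0 / commands


def busy_fraction(refresh_duration_ns: float, interval_us: float) -> float:
    """Share of time the memory is unavailable because it is refreshing."""
    return refresh_duration_ns / (interval_us * 1000.0)


if __name__ == "__main__":
    interval = refresh_interval_us()
    print(f"refresh every {interval} us")
    # Hypothetical 260 ns per refresh command.
    print(f"busy {busy_fraction(260, interval):.2%} of the time")
```

With those assumptions the interval is 64,000 μs / 8192 = 7.8125 μs, and a refresh that takes a few hundred nanoseconds keeps the memory busy for a few percent of the time.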

Applying Machine Learning At The Front End Of HPC

IBM and the other vendors who are bidding on the CORAL2 systems for the US Department of Energy can’t talk about those bids, which are in flight, and Big Blue and its partners in building the “Summit” supercomputer at Oak Ridge National Laboratory and “Sierra” at Lawrence Livermore National Laboratory – that would be Nvidia for GPUs and Mellanox Technologies for InfiniBand interconnect – are all about publicly focusing on the present, since these two machines are at the top of the flops charts now. …

Applying Machine Learning At The Front End Of HPC was written by Timothy Prickett Morgan.

Dell EMC Gets Serious About HPC – Again

Over the years, Dell EMC has always had a hand in HPC and supercomputing. …

Dell EMC Gets Serious About HPC – Again was written by Jeffrey Burt.

Weekly Show 417: Meeting 5G Demands With Cisco’s 5G xHaul Transport (Sponsored)

In today’s sponsored podcast we discuss the new demands that 5G will put on service providers, and how Cisco’s 5G xHaul Transport solution can meet those demands.

The post Weekly Show 417: Meeting 5G Demands With Cisco’s 5G xHaul Transport (Sponsored) appeared first on Packet Pushers.

Serverless Progressive Web Apps using React with Cloudflare Workers

Let me tell you the story of how I learned that you can build Progressive Web Apps on Cloudflare’s network around the globe with one JavaScript bundle that runs both in the browser and on Cloudflare Workers with no modification and no separate bundling for client and server. And when registered as a Service Worker, the same JavaScript bundle will turn your page into a Progressive Web App that doesn’t even make network requests. Here's how that works...

"Any resemblance to actual startups, living or IPO'd, is purely coincidental and unintended" - @sevki

A (possibly apocryphal) Story

I recently met up with some old friends in London who told me they were starting a new business. They did what every coder would do... they quickly hacked something together, bought a domain, and registered the GitHub org, and thus Buzzwords was born.

The idea was simple: you could feed the name of your application into a machine learning model and it would generate the configuration files for your deployment for various container orchestrators. They achieved this by going through millions of deployment configurations and training a linear regression model by gamifying quantum computing because blockchain, or something (I told you this Continue reading

Black Friday Blowout: Hot Tech Picks for 2019

‘Tis the season… for the best shopping deals! What’s on tech pros’ must-have technology list for 2019?

From Excel to Network Infrastructure as Code with Carl Buchmann

After a series of forward-looking podcast episodes, we returned to real life and talked with Carl Buchmann about his network automation journey, from managing upgrades with Excel and using Excel as the configuration consistency tool to the network-infrastructure-as-code concepts he described in a guest blog post in February 2018.

Read more ...