Review: Hot new tools to fight insider threats

In the 1979 film When a Stranger Calls, the horror arrives when police tell a young babysitter that the harassing phone calls she has been receiving are coming from inside the house. It terrified viewers because the intruder was already inside, presumably free to wreak whatever havoc he wanted, unimpeded by locked doors or other perimeter defenses. In 2016, the same fear is rightly felt toward a similar danger in cybersecurity: the insider threat.

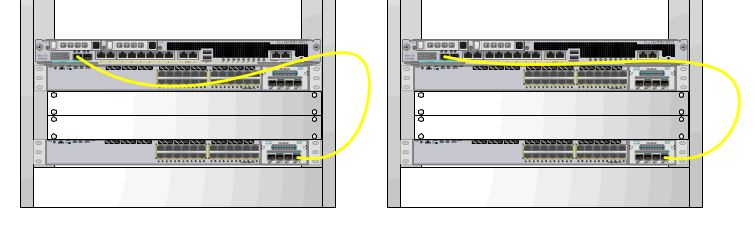

The image on the right ticks the box for me. There's no room for a dedicated 1RU horizontal cable manager, but there is room for a zero-RU strain-relief bar (as seen below). The result is a relatively neat cabling job. It's no work of art, but it's functional.

A strain-relief bar is a cheap metal bar that you can bolt on when you rack-mount your switch. It lets you Velcro your fiber patches to the bar, which takes the strain to help prevent breaks and stops the dreaded cable droop. You should, of course, take care not to block access to any field-replaceable units, cards, or ports on your network device.