2022 年第二季度 DDoS 攻擊趨勢

歡迎閱讀我們的 2022 年第二季 DDoS 報告。本報告包括有關 DDoS 威脅情勢的深入解析與趨勢,這些資訊從全球 Cloudflare 網路中觀察所得。Radar 上也會提供本報告的互動版本。

第二季度,我們看到了有史以來最大的一些攻擊,包括 Cloudflare 自動偵測並緩解的每秒 2600 萬個請求的 HTTPS DDoS 攻擊。此外,針對烏克蘭和俄羅斯的攻擊仍在繼續,而新的 DDoS 勒索攻擊活動又出現了。

重點內容

俄羅斯和烏克蘭網際網路

- 地面戰爭伴隨著針對資訊傳播的攻擊。

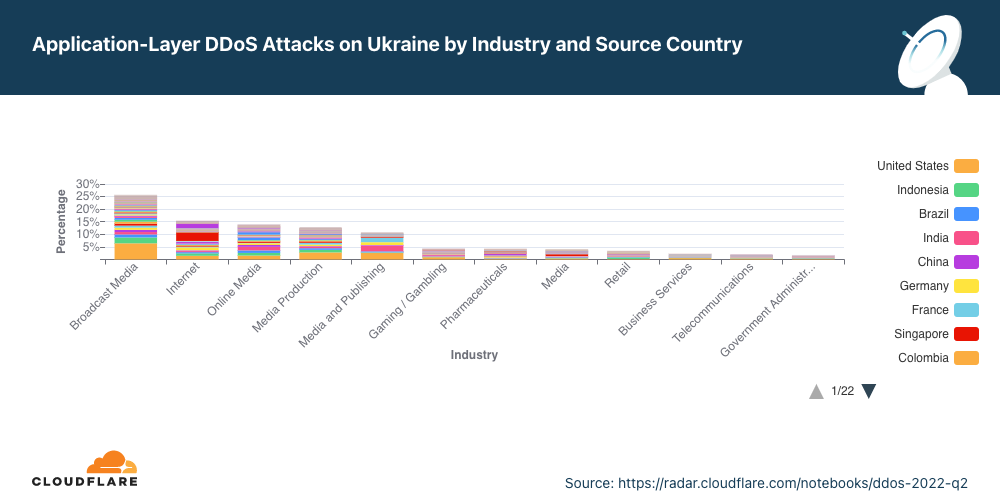

- 烏克蘭的廣播媒體公司成為第二季度 DDoS 攻擊的最大目標。事實上,前六大最受攻擊的產業均為網路/網際網路媒體、出版業和廣播業。

- 另一方面,在俄羅斯,網路媒體曾經是遭受攻擊最多的產業,如今下降到第三位。第二季度,俄羅斯的銀行、金融服務及保險業 (BFSI) 公司成為遭受攻擊最多的公司,躍居首位;BFSI 產業成為幾乎 50% 的應用程式層 DDoS 攻擊的目標。俄羅斯的加密貨幣公司是遭受攻擊第二多的公司。

更一步瞭解 Cloudflare 如何讓開放式網際網路流量流入俄羅斯,同時避免向外展開攻擊。

DDoS 勒索攻擊

- 我們觀察到新一波 DDoS 勒索攻擊,由自稱 Fancy Lazarus 的實體發出。

- 2022 年 6 月,勒索攻擊達到今年以來的最高水準:每五名經歷過 DDoS 攻擊的問卷調查受訪者中,就有一人報告受到 DDoS 勒索攻擊或其他威脅。

- 整體來說,第二季度 DDoS 勒索攻擊環比增長 11%。

應用程式層 DDoS 攻擊

- 2022 年第二季度,應用程式層 DDoS 攻擊同比增長 44%。

- 位於美國的組織是此類攻擊的主要目標,其次是賽普勒斯、香港和中國。針對賽普勒斯組織的攻擊數環比增長 171%。

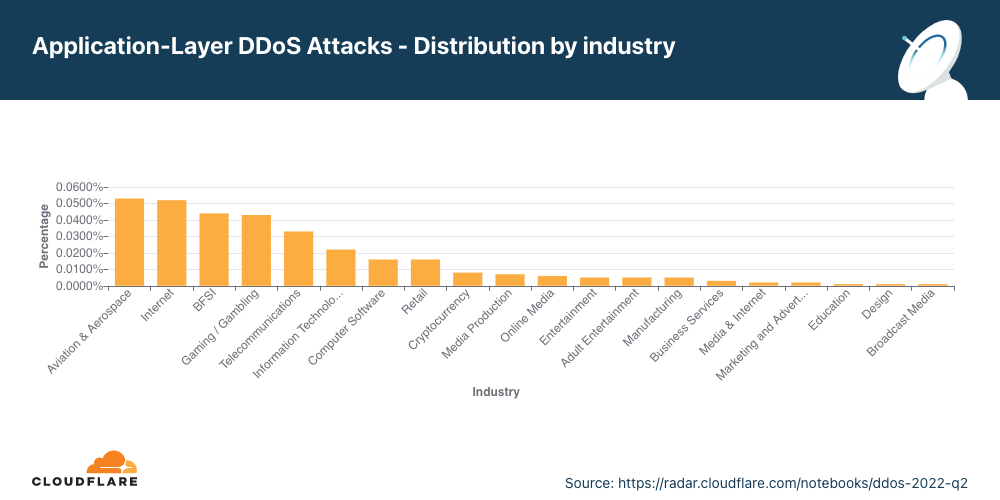

- 第二季度,航空和太空產業遭受此類攻擊最多,其次是網際網路產業、銀行業、金融服務業及保險業,而遊戲/博彩業則位居第四。

網路層 DDoS 攻擊

- 2022 年第二季度,網路層 DDoS 攻擊數同比增長 75%。100 Gbps 及以上的攻擊數環比增長 19%,持續 3 小時以上的攻擊數環比增長 9%。

- 遭受此類攻擊最多的產業分別是電信業、遊戲/博彩以及資訊技術和服務業。

- 美國的組織是此類攻擊的主要目標,其次是新加坡、德國和中國。

本報告基於 Cloudflare 的 DDoS 防護系統自動偵測和緩解的 DDoS 攻擊數。如需深入瞭解該系統的運作方式,請查看此深度剖析部落格貼文。

有關我們如何衡量在網路中觀察到的 DDoS 攻擊的說明

為分析攻擊趨勢,我們會計算「DDoS 活動」率,即攻擊流量在我們的全球網路中、特定位置或特定類別(如行業或帳單國家/地區)觀察到的總流量(攻擊流量+潔淨流量)中所佔的百分比。透過衡量這些百分比,我們能夠標準化資料點並避免以絕對數字反映而出現的偏頗,例如,某個 Cloudflare 資料中心接收到更多的總流量,因而發現更多攻擊。

勒索攻擊

我們的系統會持續分析流量,並在偵測到 DDoS 攻擊時自動套用緩解措施。每個受到 DDoS 攻擊的客戶都會收到提示,請求參與一個自動化調查,以幫助我們更好地瞭解該攻擊的性質以及緩解措施的成功率。

兩年多以來,Cloudflare 一直在對受到攻擊的客戶進行調查,調查中的一個問題是,他們是否收到威脅或勒索信,要求付款以換得停止 DDoS 攻擊。

第二季度報告威脅或勒索信的受訪者數量環比和同比增長 11%。在本季度,我們一直在緩解 DDoS 勒索攻擊,這些攻擊由自稱是進階持續威脅 (APT) 組織「Fancy Lazarus」的實體發起的。金融機構和加密貨幣公司成為這起活動的主要目標。

深入探究第二季度,我們可以看到,在 6 月份,每五名受訪者中就有一人報告收到 DDoS 勒索攻擊或威脅 — 這既是 2022 年報告數量最多的月份,也是自 2021 年 12 月以來報告數量最多的月份。

應用程式層 DDoS 攻擊

應用程式層 DDoS 攻擊,特別是 HTTP DDoS 攻擊,旨在通過使 HTTP 伺服器無法處理合法用戶請求來破壞它。如果伺服器收到的請求數量超過其處理能力,伺服器將丟棄合法請求甚至崩潰,導致對合法使用者的服務效能下降或中斷。

應用程式層 DDoS 攻擊:月份分佈

第二季度,應用程式層 DDoS 攻擊數同比增長 44%。

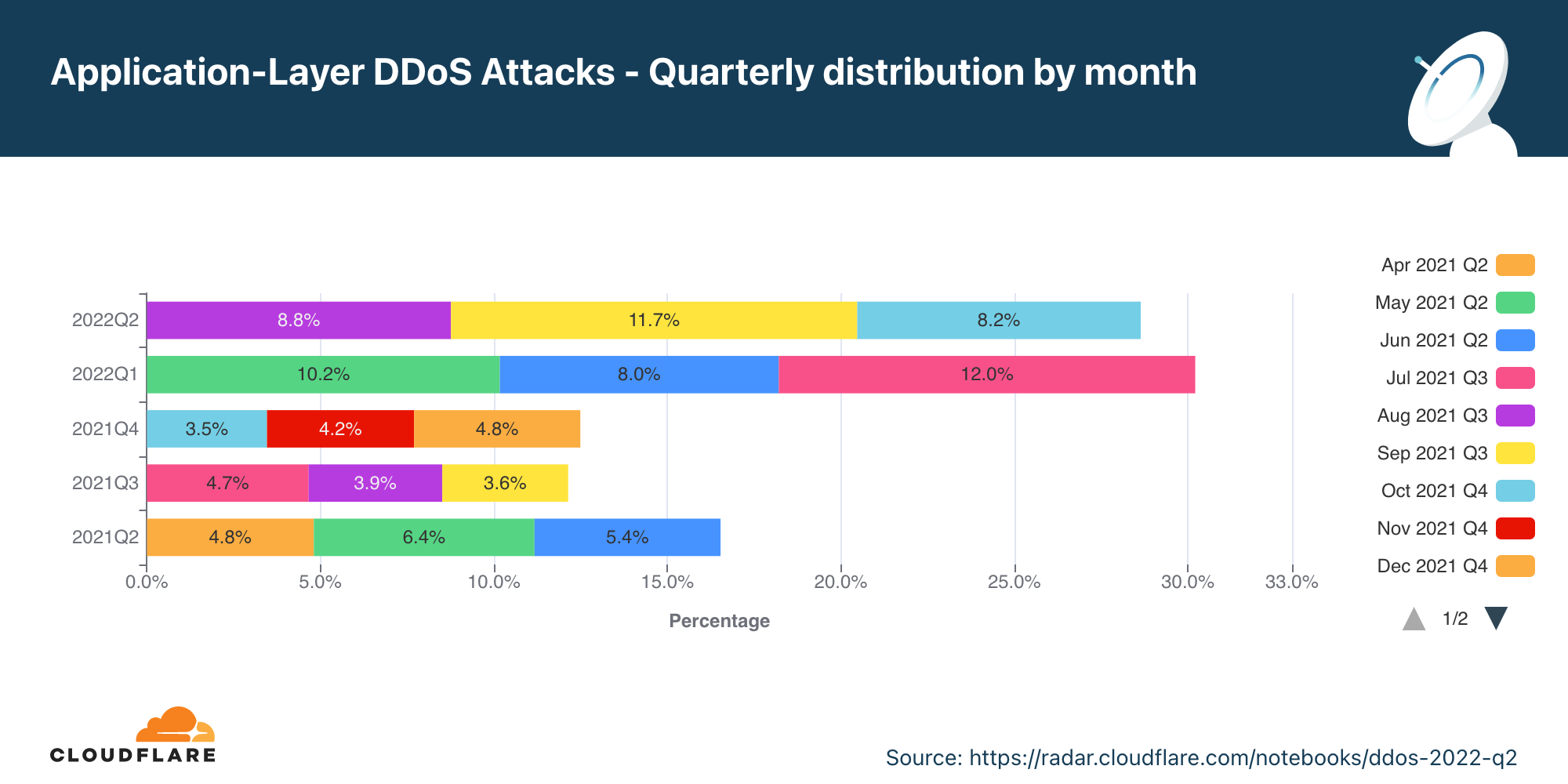

整體來說,在第二季度,應用程式層 DDoS 攻擊數量同比增長 44%,但環比下降 16%。5 月是本季度最繁忙的月份。幾乎 47% 的應用程式層 DDoS 攻擊都發生在 5 月,而 6 月發生的攻擊數最少 (18%)。

應用程式層 DDoS 攻擊:行業分佈

針對航空和太空業的攻擊數環比增長 256%。

第二季度,航空和太空是遭受應用程式層 DDoS 攻擊最多的產業。銀行、金融機構和保險業 (BFSI) 緊隨其後,而遊戲/博彩業則位居第三。

烏克蘭和俄羅斯的網路空間

媒體和出版公司是烏克蘭遭受攻擊最多的公司。

隨著烏克蘭地面、空中和水面戰爭的繼續,網路空間的戰爭也在繼續。將烏克蘭公司作為攻擊目標的實體似乎在試圖掩蓋資訊。烏克蘭遭受攻擊最多的前六大產業均為廣播、網際網路、網路媒體和出版業 — 這幾乎占所有針對烏克蘭的 DDoS 攻擊的 80%。

而戰爭的另一方,俄羅斯的銀行、金融機構和保險 (BFSI) 公司受到的攻擊最多。幾乎 50% 的 DDoS 攻擊的目標都是 BFSI Continue reading

2022년 2분기 DDoS 공격 동향

2022년 2분기 DDoS 보고서에 오신 것을 환영합니다. 이 보고서에는 Cloudflare 네트워크 전반에서 관찰된 DDoS 위협 환경에 대한 인사이트와 동향이 담겨있습니다. 이 보고서의 인터랙티브 버전을 Radar에서도 이용할 수 있습니다.

2분기에 우리는 Cloudflare에서 자동으로 감지하고 대처한 초당 2,600만 회의 요청이 이루어진 HTTPS DDoS 공격을 포함하여 사상 최대 규모의 공격을 경험했습니다. 또한, 우크라이나와 러시아에 대한 공격은 지속되고 있으며, 새로운 랜섬 DDoS 공격이 등장하였습니다.

주요 특징

우크라이나와 러시아에서의 인터넷

- 지상에서의 전쟁은 정보 전파를 겨냥하는 공격과 함께 이루어집니다.

- 2분기에 가장 많은 DDoS 공격이 이루어진 대상은 우크라이나의 방송매체 회사들이었습니다. 실제로, 가장 많은 공격을 받은 상위 6개 산업은 모두 온라인/인터넷 매체, 출판, 방송 분야에 속했습니다.

- 반면, 러시아의 경우 온라인 매체는 가장 많은 공격을 받은 산업 순위에서 3위로 처집니다. 온라인 매체보다 순위가 높은 산업을 보면 러시아의 은행, 금융 서비스 및 보험(BFSI) 회사들이 2분기에 공격을 가장 많이 받았고, 전체 응용 프로그램 계층 DDoS 공격의 거의 50%가 BFSI 분야를 대상으로 했습니다. 두 번째로 공격을 많이 받은 것은 암호화폐 회사들이었습니다.

개방형 인터넷이 러시아로 계속 유입되도록 하고 공격이 외부로 유출되지 않도록 차단하기 위해 Cloudflare에서 어떤 일을 하는지 자세히 읽어보세요.

랜섬 DDoS 공격

- 우리는 팬시 라자러스(Fancy Lazarus)라고 자칭하는 공격자들에 의한 랜섬 DDoS 공격이 급증하는 것을 목격했습니다.

- 2022년 6월에는 랜섬 공격 건수가 올해 들어 최고 수준으로 늘어났습니다. DDoS 공격을 경험한 Continue reading

Tendances en matière d’attaques DDoS au deuxième trimestre 2022

Bienvenue dans notre rapport consacré aux attaques DDoS survenues lors du deuxième trimestre 2022. Ce document présente des tendances et des statistiques relatives au panorama des menaces DDoS, telles qu'observées sur le réseau mondial de Cloudflare. Une version interactive de ce rapport est également disponible sur Radar.

Au cours du deuxième trimestre, nous avons observé certaines des plus vastes attaques jamais enregistrées, notamment une attaque DDoS HTTPS de 26 millions de requêtes par seconde, automatiquement détectée et atténuée par nos soins. Nous avons également constaté la poursuite des attaques contre l'Ukraine et la Russie, de même que l'émergence d'une nouvelle campagne d'attaques DDoS avec demande de rançon.

Points clés

Le réseau Internet russe et ukrainien

- La guerre au sol s'accompagne d'attaques ciblant la diffusion des informations.

- Les entreprises du secteur audiovisuel ukrainien ont été les plus visées par les attaques DDoS au deuxième trimestre. Pour tout dire, les six secteurs les plus attaqués se situent tous dans le domaine des médias en ligne/Internet, de la publication et de l'audiovisuel.

- À l'inverse, en Russie, les médias en ligne reculent de secteur le plus attaqué à la troisième place. Les entreprises du secteur de la banque, des assurances et des Continue reading

Entwicklung der DDoS-Bedrohungslandschaft im zweiten Quartal 2022

Willkommen zu unserem DDoS-Bericht für das zweite Quartal 2022. Darin beschreiben wir Trends hinsichtlich der DDoS-Bedrohungslandschaft, die sich im globalen Cloudflare-Netzwerk beobachten ließen, und die von uns daraus gezogenen Schlüsse. Eine interaktive Version dieses Berichts ist auch bei Radar verfügbar.

Im zweiten Quartal haben wir einige der größten Angriffen aller Zeiten registriert, darunter eine HTTPS-DDoS-Attacke mit 26 Millionen Anfragen pro Sekunde, die von Cloudflare automatisch erkannt und abgewehrt wurde. Neben fortgesetzten Angriffen auf die Ukraine und Russland hat sich zudem eine neue Ransom-DDoS-Angriffskampagne entwickelt.

Die wichtigsten Erkenntnisse auf einen Blick

Das Internet in Russland und der Ukraine

- Der Krieg wird nicht nur physisch, sondern auch in der digitalen Welt ausgefochten. Dort zielen die Angriffe darauf ab, die Verbreitung von Informationen zu verhindern.

- In der Ukraine waren im zweiten Quartal Rundfunk- und Medienunternehmen das häufigste Ziel von DDoS-Angriffen. Tatsächlich sind die sechs am stärkten betroffenen Branchen alle den Bereichen Online-/Internetmedien, Verlagswesen und Rundfunk zuzurechnen.

- Demgegenüber sind in Russland die Online-Medien unter den Angriffszielen auf den dritten Platz zurückgefallen. Spitzenreiter war das Segment Banken, Finanzdienstleistungen und Versicherungen (Banking, Financial Services and Insurance – BFSI). Fast 50 % aller DDoS-Angriffe auf Anwendungsschicht richteten sich gegen diese Sparte. Am zweithäufigsten wurden in Russland Continue reading

Tendencias de los ataques DDoS en el segundo trimestre de 2022

Te damos la bienvenida a nuestro informe sobre los ataques DDoS del segundo trimestre de 2022, que incluye nuevos datos y tendencias sobre el panorama de las amenazas DDoS, según lo observado en la red global de Cloudflare. Puedes consultar la versión interactiva de este informe en Radar.

En el segundo trimestre, hemos observado algunos de los mayores ataques hasta la fecha, incluido un ataque DDoS HTTPS de 26 millones de solicitudes por segundo que Cloudflare detectó y mitigó automáticamente. Además, continúan los ataques contra Ucrania y Rusia, al tiempo que ha aparecido una nueva campaña de ataques DDoS de rescate.

Aspectos destacados

Internet en Ucrania y Rusia

- La guerra en el terreno va acompañada de ataques dirigidos a la difusión de información.

- Las empresas de medios de comunicación de Ucrania fueron el blanco más común de ataques DDoS en el segundo trimestre. De hecho, los seis sectores que recibieron el mayor número de ataques pertenecen a los medios de comunicación en línea/Internet, la edición y audiovisual.

- En Rusia, por el contrario, los medios de comunicación en línea descendieron al tercer lugar como el sector más afectado. En los primeros puestos, las empresas de banca, servicios financieros y seguros (BFSI) Continue reading

Tendências de ataques DDoS no segundo trimestre de 2022

Bem-vindo ao nosso relatório de DDoS do segundo trimestre de 2022. Este relatório inclui informações e tendências sobre o cenário de ameaças DDoS — conforme observado em toda a Rede global da Cloudflare. Uma versão interativa deste relatório também está disponível no Radar.

No segundo trimestre deste ano, aconteceram os maiores ataques da história, incluindo um ataque DDoS por HTTPS de 26 milhões de solicitações por segundo que a Cloudflare detectou e mitigou de forma automática. Além disso, os ataques contra a Ucrânia e a Rússia continuam, ao mesmo tempo em que surgiu uma campanha de ataques DDoS com pedido de resgate.

Destaques

Internet na Ucrânia e na Rússia

- A guerra no terreno é acompanhada por ataques direcionados à distribuição de informações.

- Empresas de mídia de radiodifusão na Ucrânia foram as mais visadas por ataques DDoS no segundo trimestre. Na verdade, todos os seis principais setores vitimados estão na mídia on-line/internet, publicações e radiodifusão.

- Por outro lado, na Rússia, a mídia on-line deixou de ser o setor mais atacado e caiu para o terceiro lugar. No topo, estão empresas como bancos, serviços financeiros e seguros (BFSI, na sigla em inglês) do país, que foram as mais visadas no segundo trimestre; Continue reading

How To Maintain a Robust IT Talent Pool in a Post-pandemic Workforce

The Great Re-Evaluation has brought a renewed focus on hiring practices. Candidates expect more from the entire interview, hiring, and onboarding process.Internet disruptions overview for Q2 2022

Cloudflare operates in more than 270 cities in over 100 countries, where we interconnect with over 10,000 network providers in order to provide a broad range of services to millions of customers. The breadth of both our network and our customer base provides us with a unique perspective on Internet resilience, enabling us to observe the impact of Internet disruptions. In many cases, these disruptions can be attributed to a physical event, while in other cases, they are due to an intentional government-directed shutdown. In this post, we review selected Internet disruptions observed by Cloudflare during the second quarter of 2022, supported by traffic graphs from Cloudflare Radar and other internal Cloudflare tools, and grouped by associated cause or common geography.

Optic outages

This quarter, we saw the usual complement of damage to both terrestrial and submarine fiber-optic cables, including one that impacted multiple countries across thousands of miles, and another more localized outage that was due to an errant rodent.

Comcast

On April 25, Comcast subscribers in nearly 20 southwestern Florida cities experienced an outage, reportedly due to a fiber cut. The traffic impact of this cut is clearly visible in the graph below, with Cloudflare traffic Continue reading

Making Page Shield malicious code alerts more actionable

Last year during CIO week, we announced Page Shield in general availability. Today, we talk about improvements we’ve made to help Page Shield users focus on the highest impact scripts and get more value out of the product. In this post we go over improvements to script status, metadata and categorization.

What is Page Shield?

Page Shield protects website owners and visitors against malicious 3rd party JavaScript. JavaScript can be leveraged in a number of malicious ways: browser-side crypto mining, data exfiltration and malware injection to mention a few.

For example a single hijacked JavaScript can expose millions of user’s credit card details across a range of websites to a malicious actor. The bad actor would scrape details by leveraging a compromised JavaScript library, skimming inputs to a form and exfiltrating this to a 3rd party endpoint under their control.

Today Page Shield partially relies on Content Security Policies (CSP), a browser native framework that can be used to control and gain visibility of which scripts are allowed to load on pages (while also reporting on any violations). We use these violation reports to provide detailed information in the Cloudflare dashboard regarding scripts being loaded by end-user browsers.

Page Shield Continue reading

Modifying Maximum Throughput of Catalyst8000v

The Catalyst8000v is Cisco’s virtual version of the Catalyst 8000 platform. It is the go to platform and a replacement of previous products such as CSR1000v, vEdge cloud, and ISRV. When installing a Catalyst8000v, it comes with a builtin shaper setting the maximum throughput to 10 Mbit/s as can be seen below:

R1#show platform hardware throughput level The current throughput level is 10000 kb/s

This is most likely enough to perform labbing but obviously not enough to run production workloads. You may be familiar with Smart Licensing on Cisco. Previously, licensing was enforced and it wasn’t possible to modify throughput without first applying a license to a device. In releases 17.3.2 and later, Cisco started implementing Smart Licensing Using Policy which essentially means that most of the licenses are trust-based and you only have to report your usage. There are exceptions, such as export-controlled licenses like HSEC which is for high speed crypto, anything above 250 Mbit/s of crypto. To modify the maximum throughput of Catalyst8000v, follow these steps:

R1#conf t Enter configuration commands, one per line. End with CNTL/Z. R1(config)#platform hardware throughput level MB ? 100 Mbps 1000 Mbps 10000 Mbps 15 Mbps 25 Mbps 250 Mbps 2500 Continue reading

Stop Ignoring Your Records Problem, The Fix May Be Hidden in Plain Sight

As businesses digitally transform and undertake process optimization projects, IT can help address records management by building in records compliance and classification and automating a standardized retention and disposal schedule for them.Random. How to Choose Your Desktop Operating System for Network Automation Development

Hello my friend,

After writing quite long and complicated previous blogpost about CI/CD with GitHub, I need some therapy to write something light and chill. I decided to choose the setup of the working space for development and utilisation of the network automation and, in general, network design and operations. Though I don’t pretend to be absolutely objective and unbiased, as it is simply not possible, I intend to share some observations I did from my own experience and discussions with our network automation students, which I hope will be interesting for you.

2

3

4

5

retrieval system, or transmitted in any form or by any

means, electronic, mechanical or photocopying, recording,

or otherwise, for commercial purposes without the

prior permission of the author.

Why Is It Important?

During our Zero-to-Hero Network Automation Trainings, and other trainings as well, we talk a lot about choice of tools to build automation solutions: they shall be fit for purpose and easy to use. However, in addition to that, you should also feel a fun, when you utilise them. It may sound odd, as we are Continue reading

Is Security A Feature Or A Product?

This post originally appeared on the Packet Pushers’ Ignition site on July 9, 2019. Premise: I would be cautious about a vendor who sells security as a product or a critical/primary feature. Security-as-a-product is coming to an end. We need to return to making the things we already have work efficiently. There is only so […]

The post Is Security A Feature Or A Product? appeared first on Packet Pushers.

Saying “Yes” the Right Way

If only I had known how hard it was to say “no” to someone. Based on the response that my post about declining things had gotten I’d say there are a lot of opinions on the subject. Some of them were positive and talked about how hard it is to decline things. Others told me I was stupid because you can’t say no to your boss. I did, however, get a direct message from Paul Lampron (@Networkified) that said I should have a follow up post about saying yes in a responsible manner.

Positively Perfect

The first thing you have to understand about the act of asking something is that we’re not all wired the same way when it comes to saying yes. I realize that article is over a decade old at this point but the ideas in it remain valid, as does this similar one from the Guardian. Depending on your personality or how you were raised you may not have the outcome you were expecting when you ask.

Let me give you a quick personal example. I was raised with a southern style mentality that involves not just coming out and asking for something. You Continue reading

A July 4 technical reading list

Here’s a short list of recent technical blog posts to give you something to read today.

Internet Explorer, we hardly knew ye

Microsoft has announced the end-of-life for the venerable Internet Explorer browser. Here we take a look at the demise of IE and the rise of the Edge browser. And we investigate how many bots on the Internet continue to impersonate Internet Explorer versions that have long since been replaced.

Live-patching security vulnerabilities inside the Linux kernel with eBPF Linux Security Module

Looking for something with a lot of technical detail? Look no further than this blog about live-patching the Linux kernel using eBPF. Code, Makefiles and more within!

Hertzbleed explained

Feeling mathematical? Or just need a dose of CPU-level antics? Look no further than this deep explainer about how CPU frequency scaling leads to a nasty side channel affecting cryptographic algorithms.

Early Hints update: How Cloudflare, Google, and Shopify are working together to build a faster Internet for everyone

The HTTP standard for Early Hints shows a lot of promise. How much? In this blog post, we dig into data about Early Hints in the real world and show how much faster the web is with it.