OpenClaw Ruined AI and It Makes Me Happy

The biggest AI story of 2026 isn’t the growing need for electrical power or the ridiculous way the market sold out for RAM based on a letter of intent to acquire. No, the biggest AI story of the year so far is how a scrappy little project completely upset the AI apple cart. OpenClaw (nee ClaudeBot, nee OpenMolt) set the world on fire. And it destroyed how people were trying to direct AI. I’m sitting over here giggling about it.

Round The Clock

The basics of OpenClaw are simple enough. You have a system of agents that do things. It can read your texts or email and triage the flow of information. It can send you a text summary of the news or the weather every morning. But it can also be configured to monitor things as they arrive to deal with them on the fly. That’s where the real narrative shift has happened.

When you open a browser window to talk to an LLM you are creating a session that has a finite time limit. You are saying that you are going to work on a project for a specific period of time and that’s that. Once you complete Continue reading

NAN122: From Anxiety to Empowerment: Building Confidence into Machine‑Speed Network Updates (Sponsored)

Network teams are being asked to move faster than ever as automation and AI-driven workflows increase the volume and frequency of network changes. In this episode, sponsored by Cisco, we explore how modern network operating systems make zero-downtime, zero-stress updates possible, even at machine speed. We’ll break down three key capabilities: Atomic Config Replace (ACR),... Read more »

Browser Run: now running on Cloudflare Containers, it’s faster and more scalable

We’ve enabled higher usage limits, faster performance, and better reliability for Browser Run by rebuilding on top of Cloudflare’s Containers.

You can now spin up 60 browsers per minute via the Workers binding and run up to 120 concurrently — 4x the previous limit. Also, Quick Action response times dropped more than 50%. You don't need to change anything: these improvements are live today. On top of that, we’re shipping fixes and new features faster than before. Read on to learn how we did it and see the data.
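For a sense of how a client might stay inside those two caps, here is a minimal Python sketch. It is not Cloudflare's code or API: the `LaunchBudget` class is hypothetical, and treating the 60-per-minute figure as a sliding 60-second window is an assumption, since the post does not say how the limit is measured.

```python
from collections import deque


class LaunchBudget:
    """Hypothetical client-side guard for the two limits quoted above."""

    def __init__(self, per_minute=60, max_concurrent=120):
        self.per_minute = per_minute          # launches allowed per 60 s (assumed sliding window)
        self.max_concurrent = max_concurrent  # browsers allowed to be open at once
        self.launch_times = deque()           # timestamps of recent launches, oldest first
        self.active = 0                       # browsers currently open

    def try_launch(self, now):
        """Return True and record the launch if both limits allow it at time `now` (seconds)."""
        # Forget launches that have aged out of the 60-second window.
        while self.launch_times and now - self.launch_times[0] >= 60.0:
            self.launch_times.popleft()
        if len(self.launch_times) >= self.per_minute:
            return False
        if self.active >= self.max_concurrent:
            return False
        self.launch_times.append(now)
        self.active += 1
        return True

    def close(self):
        """Record that one browser session has ended."""
        if self.active > 0:
            self.active -= 1
```

Passing the clock in as `now` keeps the sketch deterministic. A real caller would supply `time.monotonic()` and call `close()` whenever a session ends.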

Browser Run enables developers to programmatically control and interact with headless browser instances running on Cloudflare’s global network. That’s useful for end-to-end testing of web applications, securely investigating suspicious URLs, and leveraging how browsers can easily render PDF documents, amongst other quick actions like capturing screenshots and extracting content. More recently, it’s become a critical enabler of AI agents to interact with the web. We’re building Browser Run to be the go-to platform to responsibly utilize automated browsers securely at massive scale.

Before adopting Cloudflare Containers, we shared infrastructure with Browser Isolation (BISO). While technically similar, BISO’s larger container images slowed Continue reading

Hands-On Introduction to SR-MPLS

The second demo I did during the Segment Routing workshop @ ITNOG10 illustrated how easy it is to set up and explore a small SR-MPLS network with netlab. The lab topology described a small three-router network (you need three routers to see “true” labels besides the penultimate-hop popping ones):

Meet NFA v26.02, featuring BGP visibility tools, extended threshold matching, and SNMP reporting enhancements.

BGP diagnostics and visibility tools

The biggest addition in v26.02 is a complete set of BGP diagnostics and visibility tools. These give network administrators new insights into routing behavior directly within NFA. The new BGP diagnostics panel introduces ping and traceroute checks, allowing engineers to run connectivity and path diagnostics without leaving the NFA interface. Additionally, a BGP Data Lookup feature enables direct queries against NFA’s internal BGP tables, supporting exact-match and more-specific match modes for precise prefix investigations. Finally, BGP History Lookup provides access to historical route events, including key attributes such as prefix, next-hop, AS path, and more. This makes it easier to trace routing changes over time and connect them with traffic events.
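The difference between the two match modes can be illustrated with Python's standard `ipaddress` module. This is a sketch of the general idea only, not NFA's implementation: the `lookup` function, the `TABLE` contents, and the choice to count the queried prefix itself as a "more-specific" hit are all assumptions.

```python
import ipaddress

# Hypothetical prefix table, standing in for a BGP table.
TABLE = [
    "10.0.0.0/8",
    "10.1.0.0/16",
    "10.1.2.0/24",
    "192.0.2.0/24",
    "2001:db8::/32",
]


def lookup(table, query, mode="exact"):
    """Return the prefixes in `table` that match `query` under the given mode."""
    q = ipaddress.ip_network(query)
    nets = [ipaddress.ip_network(p) for p in table]
    if mode == "exact":
        # Same network address and same prefix length.
        hits = [n for n in nets if n == q]
    elif mode == "more-specific":
        # Prefixes contained in the query (assumed to include the query itself).
        # subnet_of() raises TypeError across IP versions, so filter on version first.
        hits = [n for n in nets if n.version == q.version and n.subnet_of(q)]
    else:
        raise ValueError(f"unknown mode: {mode}")
    return [str(n) for n in sorted(hits)]
```

With this table, an exact lookup for `10.1.0.0/16` returns only that prefix, while the more-specific mode also returns `10.1.2.0/24`.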

We’re excited to announce the release of Noction Flow Analyzer v26.02. This version includes a focused set of improvements that enhance Continue reading

Pytest for Automated Network Testing (II)

In part one, we covered the basics of pytest and wrote our first network tests. We tested BGP and OSPF on a single device, then extended it to multiple devices. We also looked at parametrization and how it helps treat each device and each neighbour as an independent test.
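As a reminder of what "each neighbour as an independent test" means in practice, here is a small sketch in plain Python. The device names, addresses, states, and helper functions are hypothetical and are not taken from part one. The sketch only builds the list of cases and runs one check per case, which is the shape of data that `pytest.mark.parametrize` consumes.

```python
# Hypothetical snapshot of BGP state, standing in for data collected from devices.
OBSERVED = {
    "r1": {"10.0.0.2": "Established", "10.0.0.3": "Idle"},
    "r2": {"10.0.0.1": "Established"},
}


def neighbour_cases(observed):
    """Flatten {device: {neighbour: state}} into one (device, neighbour) pair per test case."""
    return [(dev, nbr) for dev in sorted(observed) for nbr in sorted(observed[dev])]


def check_bgp_established(observed, device, neighbour):
    """A single check: fails only for the one neighbour that is not Established."""
    state = observed[device][neighbour]
    assert state == "Established", f"{device}: neighbour {neighbour} is {state}"


def run_all(observed):
    """Run every case independently and collect failure messages instead of stopping early."""
    failures = []
    for device, neighbour in neighbour_cases(observed):
        try:
            check_bgp_established(observed, device, neighbour)
        except AssertionError as exc:
            failures.append(str(exc))
    return failures
```

Under pytest, the output of `neighbour_cases(OBSERVED)` would be passed as the argument values to `@pytest.mark.parametrize("device,neighbour", ...)`, so one failing neighbour shows up as one failed test while the rest still pass.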

In this part, we will cover inventory management with Nornir and pytest fixtures.

Nornir Introduction

Nornir is a Python automation framework designed for network engineers. Instead of writing your own logic to connect to devices, manage inventory, and run tasks in parallel, Nornir handles all of that for you. We have a dedicated series on Nornir, which you can check out here, so we are not going to do a deep dive in this post.

The reason we are using Nornir here is for inventory and task management. Instead of hardcoding a list of IP addresses in our collection file, we define our devices in a hosts file with groups, credentials, and Continue reading

PP109: ThreatLocker Enforces Zero Trust With Strict Application Control (Sponsored)

ThreatLocker takes an opinionated approach to Zero Trust. The company, our sponsor for today’s episode, starts with application control. It uses endpoint software that runs on PCs and servers to allow or deny applications to run. It can also monitor and control the behavior of allowed applications. ThreatLocker has extended its platform to include network... Read more »

HW078: Companion Tools for Wireless Validation Surveys

Guest Nick Turner joins Keith to discuss the technicalities of Wi-Fi validation survey file structures. Nick has spent a lot of time deep in the weeds of .ESX files, and he’s here to share workflows and utilities you can use to help navigate, migrate, back up, and operationalize .ESX files. If you’ve ever wondered exactly... Read more »

Securing the Untrusted Agentic Development Layer

Join us to learn how to architect a development environment where your builders and their agents can move fast and securely.

When “idle” isn’t idle: how a Linux kernel optimization became a QUIC bug

CUBIC, standardized in RFC 9438, is the default congestion controller in Linux, and as a result governs how most TCP and QUIC connections on the public Internet probe for available bandwidth, back off when they detect loss, and recover afterward. At Cloudflare, our open-source implementation of QUIC, quiche, uses CUBIC as its default congestion controller, meaning this code is in the critical path for a significant share of the traffic we serve.
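To make "back off, then probe and recover" concrete, here is a minimal Python sketch of the window growth function from RFC 9438, using the RFC's constants C = 0.4 and β = 0.7. It is not quiche's or the kernel's code. It covers only the cubic curve, leaving out the Reno-friendly region, app-limited handling, and everything else a real controller needs, and the example window size is made up.

```python
C = 0.4           # CUBIC scaling constant from RFC 9438
BETA_CUBIC = 0.7  # multiplicative decrease factor from RFC 9438


def cubic_k(w_max, cwnd_epoch):
    """Seconds the curve needs to climb from cwnd_epoch back up to w_max."""
    # Clamp at zero so the cube root is never taken of a negative number.
    return (max(w_max - cwnd_epoch, 0.0) / C) ** (1.0 / 3.0)


def w_cubic(t, w_max, k):
    """Target window (in segments) t seconds into the current epoch."""
    return C * (t - k) ** 3 + w_max


# Illustrative numbers: the window was 100 segments when loss was detected.
W_MAX = 100.0
CWND_EPOCH = W_MAX * BETA_CUBIC   # back off to 70 segments
K = cubic_k(W_MAX, CWND_EPOCH)    # time to return to W_MAX
```

The curve starts at the reduced window, flattens as it approaches the old maximum at `t = K`, and then accelerates past it to probe for more bandwidth.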

In this post, we’ll tell the story of a bug in which CUBIC's congestion window (cwnd) gets permanently pinned at its minimum and never recovers from a congestion collapse event.

The story starts with a Linux kernel change aimed at bringing CUBIC into line with the app-limited exclusion described in RFC 9438 §4.2-12 — a fix to a real problem in TCP that, when ported to our QUIC implementation, surfaced unexpected behaviors in quiche. It has a happy ending: an elegant (near-)one-line fix that broke the cycle.

Before we dive into the core problem, a quick refresher on Congestion Control Algorithms (CCAs) may help to set the stage.

The central knob a CCA turns is the congestion window (cwnd Continue reading

MUST READ: The Future of Everything is Lies, I Guess

Kyle Kingsbury published a long (10-part) article about his frustrations with AI, aptly named The Future of Everything is Lies, I Guess.

Regardless of where you are on the skeptic-to-fanboy spectrum, I would highly recommend you read it, even if you believe you’ll disagree with everything he wrote.

NB574: Extreme’s New AI Agent Nudges You; Cloudflare Evaporates 20% of Employees

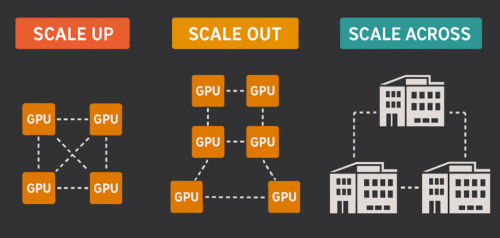

Take a Network Break! There’s a Red Alert for Apache Polaris with four CVEs that could enable unauthorized read/write access. On the news front, Lumen is spending $475 million in cash for Alkira to extend its NaaS offering across public clouds. Extreme Networks announces Wi-Fi 7 APs and new features in its Platform ONE management... Read more »

Understanding Scale-Out, Scale-Up, and Scale-Across Networking

Modern AI and HPC workloads place extraordinary demands on the underlying network infrastructure. And as network engineers, we are often pulled into conversations about GPU clusters, maybe without a clear…

The post Understanding Scale-Out, Scale-Up, and Scale-Across Networking appeared first on JTnetwork.io.

Reorganized ipSpace.net Segment Routing Resources

I created nine sample SR-MPLS topologies for the ITNOG 10 SR-MPLS workshop, and of course, we ran out of time. I plan to cover those topologies and resulting printouts in a series of blog posts; to prepare for those, I cleaned up and reorganized the Segment Routing blog category, which is now split into two:

Hope you’ll find them useful! Also, if you know of other non-vendor Segment Routing resources, please leave a comment, email me, or submit a pull request.

Quantum safe amateur radio secure shell

I’ve previously pointed out that the AX.25 implementation in the kernel is pretty poor. It’s not really being maintained, and even when it gets fixes after I report a problem, with people running LTS OSs it can take like 5 years before the fix actually reaches users, if ever. So when writing applications, you still have to work around kernel bugs from a decade ago. This makes it kind of pointless to upstream patches.

The exception is security patches, and reading between the lines of why the AX.25 code is now being removed from the kernel, it sounds like maybe some LLM (like the looming “Mythos” and the related Glasswing) may have found some severe problems. But even if there aren’t any known security problems yet, having code is now more of a liability than ever. Code needs to be removed, or someone needs to take responsibility for it. (tangent about ffmpeg at the bottom of this post)

With the kernel code removed, say goodbye to the old walkthrough.

The new API

Well, not “new”, per se, but “replacement”.

With the socket based API about to be gone, we need some other way for applications to send packets and Continue reading