TNO062: SONiC for Open Networking with Jeff Doyle

Scott Robohn is joined by networking legend Jeff Doyle to help us understand SONiC: Software for Open Networking in the Cloud. SONiC is an open-source network operating system and has been adopted by hyperscalers to run some of the world’s largest data centers. But SONiC can also be used by enterprises and service providers. Jeff...

HN826: An Inside Look at Palo Alto Networks Prisma Browser for Business (Sponsored)

A Mastercard survey reveals that 46% of small and medium businesses have experienced a cyberattack, and nearly 20% of those that suffered an attack were then forced to file for bankruptcy or close their business. Ethan and Drew along with guest Shivam Srivastava discuss a new offering from today’s sponsor, Palo Alto Networks: Prisma Browser...

SecureCRT to SuperPutty – Migrate Your Sessions with One Python Script

If you’ve ever had to move a large session library from SecureCRT to SuperPutty, you know the pain — there’s no built-in migration path, and manually re-entering dozens (or hundreds) of sessions is a miserable afternoon. I wrote SCRT_2_SPUTTY to handle it automatically. Point it at your SecureCRT XML export, and it spits out a...

The post SecureCRT to SuperPutty – Migrate Your Sessions with One Python Script first appeared on Fryguy's Blog.

Hedge 304: Deep Dive into a Network Master’s Program

If you’ve ever been curious about what an advanced degree in network engineering looks like, you’ll want to join us for this episode of the Hedge. Levi Perigo from the University of Colorado at Boulder joins Tom and Russ to talk through what earning a Master’s in Networking involves and what kinds of things you would learn.

Technology Short Take 195

Welcome to Technology Short Take #195! It wasn’t planned this way, but it seems like this Tech Short Take is heavily slanted toward AI/LLM-related articles and posts. Topics like security concerns around improper storage of API keys, how developers are using AI tools, spyware getting installed with AI assistants, and how AI/LLMs might be creating barriers to entry for new IT professionals are all on tap this time around. I hope this unintentional focus doesn’t prevent you from finding something useful!

Networking

- Ivan Pepelnjak takes readers through the process of generating partial device configurations with netlab.

- It’s an older blog post, but it checks out: have a look at this walkthrough of Containerlab and Netlab. (Hat tip to Ivan for the link. Also, bonus points if you understood the reference at the start of this paragraph.)

- Ah, MTU issues…they don’t go away if you migrate to Kubernetes.

Security

- Sean Gallagher and Omid Mirzaei from Cisco Talos discuss how threat actors are misusing AI workflow automation.

- Before this article on vulnerability triage, I’d never heard of “brocards.”

- Kelby Ludwig reminds folks that you don’t want long-lived keys.

- Davi Ottenheimer tackles the claims about risk from Anthropic’s Mythos preview.

Public Videos: Segment Routing 101

In the spring of 2017, Jeff Tantsura, the IETF Routing Area chair, delivered a short “Introduction to Segment Routing” webinar. In mid-April 2026, we had ~100 people at ITNOG 10 attending the excellent “Segment Routing: From Theory to Practice” workshop by the great Tiziano Tofoni. The future is obviously not evenly distributed.

If you’re in the early stages of your Segment Routing journey, you might appreciate the videos from Jeff’s webinar; you can now watch them without an ipSpace.net account.

Building for the future

This afternoon, we sent the following email to our global team. One of our core values at Cloudflare is transparency, and we believe it's important that you hear this directly from us because it’s a major moment at Cloudflare.

Team:

We are writing to let you know directly that we’ve made the decision to reduce Cloudflare’s workforce by more than 1,100 employees globally.

The way we work at Cloudflare has fundamentally changed. We don’t just build and sell AI tools and platforms. We are our own most demanding customer. Cloudflare’s usage of AI has increased by more than 600% in the last three months alone. Employees across the company from engineering to HR to finance to marketing run thousands of AI agent sessions each day to get their work done. That means we have to be intentional in how we architect our company for the agentic AI era in order to supercharge the value we deliver to our customers and to honor our mission to help build a better Internet for everyone, everywhere.

Today is a hard day. This decision unfortunately means saying goodbye to teammates who have contributed meaningfully to our mission and to building Cloudflare...

LIU014: Linda Haviv: From Philosophy Major to AI Engineer

Alexis and Kevin sit down with Linda Haviv, an AI/ML Engineer and founder of Coding Crystals. Linda is known for making AI infrastructure accessible, and for a career path that went from philosophy student to professional singer to self-taught developer to AI engineer. Together they discuss the difference between AI infrastructure and AI engineering, the...

UniFi Network Health Report – Open Source Python Tool (v1.0.0)

If you run a self-hosted Ubiquiti UniFi network — whether it’s a home lab, small business, or multi-site setup — you know the UniFi dashboard is great for real-time monitoring but falls short when you want a clean summary you can save, share, or review later. I built UniFi Network Health Report to fill that...

The post UniFi Network Health Report – Open Source Python Tool (v1.0.0) first appeared on Fryguy's Blog.

How Cloudflare responded to the “Copy Fail” Linux vulnerability

On April 29, 2026, a Linux kernel local privilege escalation vulnerability was publicly disclosed under the name "Copy Fail" (CVE-2026-31431). Cloudflare’s Security and Engineering teams began assessing the vulnerability as soon as it was disclosed. We reviewed the exploit technique, evaluated exposure across our infrastructure, and validated that our existing behavioral detections could identify the exploit pattern within minutes.

There was no impact to the Cloudflare environment, no customer data was at risk, and no services were disrupted at any point. Read on to learn how our preparedness paid off.

Cloudflare operates a global Linux server infrastructure at an immense scale, with datacenters located across 330 cities. We maintain a custom Linux kernel build based on the community's Long-Term Support (LTS) versions to manage updates effectively at this volume. At any given time, we may utilize multiple LTS versions from various series, such as 6.12 or 6.18, which benefit from extended update periods.

The community regularly merges and releases security and stability updates, which trigger an automated job to generate a new internal kernel build approximately every week. These builds undergo testing in our staging data centers to...

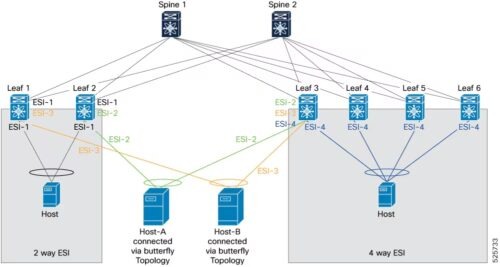

VXLAN EVPN ESI multi-homing on Nexus 9000

For years, multi-homing in VXLAN BGP/EVPN fabrics with Cisco Nexus switches meant one thing: vPC. It works very well; two-switch redundancy, familiar operational model, no need to deeply understand EVPN’s…

The post VXLAN EVPN ESI multi-homing on Nexus 9000 appeared first on JTnetwork.io.

VXLAN EVPN ESI multi-homing on Nexus 9000

For years, multi-homing in VXLAN BGP/EVPN fabrics with Cisco Nexus switches meant one thing: vPC. It works very well; two-switch redundancy, familiar operational model, no need to deeply understand EVPN’s…

The post VXLAN EVPN ESI multi-homing on Nexus 9000 appeared first on AboutNetworks.net.

On ARP and MAC Aging Timers

Naveen Kumar Devaraj mentioned an interesting fact in his EVPN-related comment:

The EOS default ARP timeout is 4 hours, and MAC aging is 5 minutes.

Arista is not the only platform using these default values; did you ever wonder where they came from?
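To make the gap between the two defaults concrete, here is a minimal sketch in Python. It is not taken from the linked post. It assumes a deliberately simplified model in which a host goes silent and neither table entry is refreshed, so each entry simply expires once the idle time reaches its timer. Real platforms typically refresh ARP entries before they expire and relearn MAC addresses from any received traffic. The names `entry_state` and `stale_window_s` are hypothetical and exist only for this illustration.

```python
# Defaults quoted above for Arista EOS, converted to seconds.
ARP_TIMEOUT_S = 4 * 60 * 60  # ARP timeout: 4 hours
MAC_AGING_S = 5 * 60         # MAC aging: 5 minutes


def entry_state(silent_for_s: int) -> dict:
    """Report which entries would still exist for a host that has been
    silent for `silent_for_s` seconds (simplified: no refresh, no relearning)."""
    return {
        "arp_entry": silent_for_s < ARP_TIMEOUT_S,
        "mac_entry": silent_for_s < MAC_AGING_S,
    }


def stale_window_s() -> int:
    """Seconds during which the ARP entry can outlive the MAC entry."""
    return max(ARP_TIMEOUT_S - MAC_AGING_S, 0)


if __name__ == "__main__":
    print(entry_state(10 * 60))  # host silent for ten minutes
    print(stale_window_s())
```

Under these assumptions, a host that stays silent for more than five minutes still has an ARP entry but no MAC table entry. In classic Layer 2 switching, frames sent to such a host are typically flooded as unknown unicast until the host transmits again.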