Cloudflare Zaraz launches new privacy features in response to French CNIL standards

Last week, the French national data protection authority (the Commission Nationale de l'Informatique et des Libertés, or “CNIL”) published guidelines for what it considers to be a GDPR-compliant way of loading Google Analytics and similar marketing technology tools. The CNIL published these guidelines following notices that it and other data protection authorities issued to several organizations using Google Analytics, stating that such use resulted in impermissible data transfers to the United States. Today, we are excited to announce a set of features and a practical step-by-step guide for using Zaraz that we believe will help organizations continue to use Google Analytics and similar tools in a way that helps protect end user privacy and avoids sending EU personal data to the United States. And the best part? It takes less than a minute.

Enter Cloudflare Zaraz.

The new Zaraz privacy features

What we are releasing today is a new set of privacy features to help our customers enhance end user privacy. Starting today, on the Zaraz dashboard, you can apply the following configurations:

- Remove URL query parameters: when toggled on, Zaraz will remove all query parameters from a URL that is reported to a third-party server. It will turn

Continue reading
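As a rough illustration of what removing query parameters from a reported URL means, here is a minimal Python sketch. It is not Zaraz's implementation; the helper name `strip_query` and the example URLs are hypothetical, and keeping the fragment is an assumption made for this example.

```python
from urllib.parse import urlsplit, urlunsplit


def strip_query(url: str) -> str:
    """Return the URL with its query string removed.

    Hypothetical helper for illustration only. The fragment is kept,
    which is an assumption of this sketch.
    """
    parts = urlsplit(url)
    # Rebuild the URL with an empty query component.
    return urlunsplit((parts.scheme, parts.netloc, parts.path, "", parts.fragment))


if __name__ == "__main__":
    # Query parameters can carry identifiers such as campaign tags or emails.
    print(strip_query("https://example.com/pricing?utm_source=news&email=a%40b.c"))
```

The point of the sketch is that the third-party server would only ever see the scheme, host, and path, not whatever identifiers happened to be in the query string.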

Day Two Cloud 151: How To Tell If Infrastructure As Code Is Working For You

Today's Day Two Cloud podcast gets into Infrastructure as Code (IaC) and how to evaluate it so that IaC practices fit your organization, your processes, the infrastructure you're trying to support, and so on. Our guest lays out some guideposts to help you understand if IaC is working for you.

Cisco Live 2022: A Kinder, Gentler, Cloudier Monster?

Cisco Live 2022 in Las Vegas kicked off with executive keynotes, including an address from CEO Chuck Robbins. My takeaways from the keynotes from Tuesday, June 14th are:

- Cisco knows it has to work harder to keep customers

- Cisco has big cloud ambitions

- Meraki is one key to Cisco’s cloud & simplicity goals

- Cisco Has […]

The post Cisco Live 2022: A Kinder, Gentler, Cloudier Monster? appeared first on Packet Pushers.

Internal Network Security Mistakes to Avoid

Network security begins at home. Here's how to effectively secure your organization against threats from within.

Hedge 134: Ten Things

One of the many reasons engineers should work for a vendor, consulting company, or someone other than a single network operator at some point in their career is to develop a larger view of network operations. What are common ways of doing things? What are uncommon ways? In what ways is every network broken? Over time, if you see enough networks, you start seeing common themes and ideas. Just like history, networks might not always be the same, but the problems we all encounter often rhyme. Ken Calenza joins Tom Ammon, Eyvonne Sharp, and Russ White to discuss these common traits—ten things I know about your network.

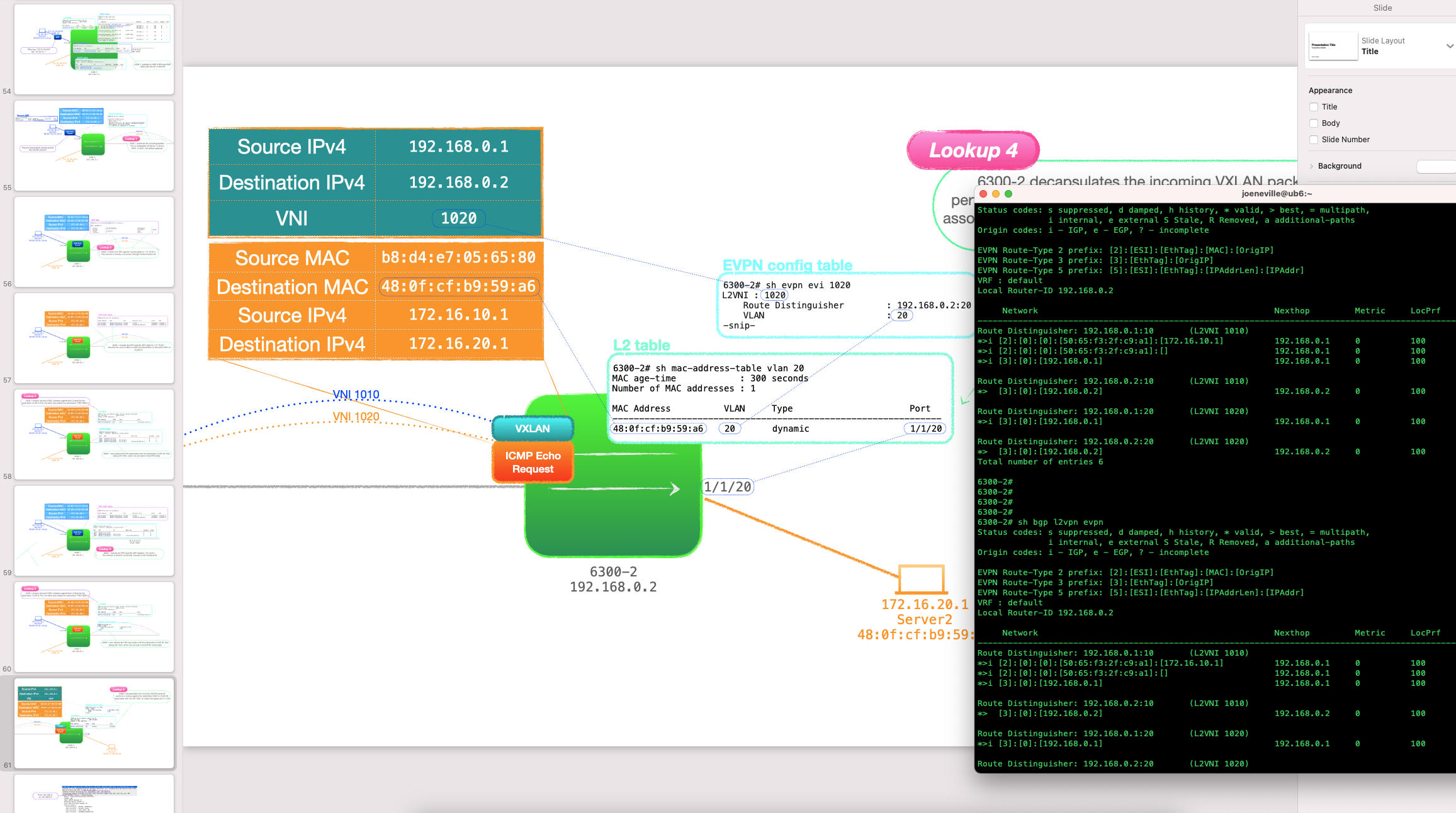

EVPN-VXLAN Explainer 5 – Layer 3 with Asymmetrical IRB

Thus far, the posts in this series have all been about Layer 2 over Layer 3 models: customer Ethernet frames encapsulated in UDP, traversing L3 networks. Routing has been confined to the underlay, and customer traffic has stayed within the same network.

No longer! In this post, things start getting a little more interesting, as we look at routing the customer traffic with an EVPN feature called Integrated Routing and Bridging, or IRB.

- First we look at the concept of routing in VXLAN networks.

- Then we have an in-depth look at asymmetrical IRB (I'll be dealing with symmetrical in the next post).

✅ L2 is intra-subnet, L3 is inter-subnet

📥 Intra-subnet

To define terms, when I say 'intra-subnet', that is L2 traffic transferred between nodes in the same subnet.

📤 Inter-subnet

'Inter-subnet' refers to a traffic flow that traverses subnet boundaries.
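To make the distinction concrete, here is a small Python sketch that classifies a flow as intra-subnet or inter-subnet. The function names and addresses are hypothetical, and the sketch assumes both hosts use the same prefix length, which is a simplification.

```python
import ipaddress


def same_subnet(ip_a: str, ip_b: str, prefix_len: int) -> bool:
    """True if both addresses fall in the same subnet.

    Assumes both hosts are configured with the same prefix length.
    """
    net_a = ipaddress.ip_interface(f"{ip_a}/{prefix_len}").network
    net_b = ipaddress.ip_interface(f"{ip_b}/{prefix_len}").network
    return net_a == net_b


def classify(ip_a: str, ip_b: str, prefix_len: int = 24) -> str:
    """Label a flow using the terms defined above."""
    if same_subnet(ip_a, ip_b, prefix_len):
        return "intra-subnet (bridged at L2)"
    return "inter-subnet (routed at L3)"


if __name__ == "__main__":
    print(classify("10.0.10.5", "10.0.10.20"))  # same /24
    print(classify("10.0.10.5", "10.0.20.5"))   # different /24s
```

A host makes essentially the same comparison when it decides whether to ARP for the destination directly or send the frame to its default gateway.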

☎️ The Centralized IP L3 Gateways of Old

- With VXLAN networks in the past, inter-subnet communication was often performed by a centralized, IP-only gateway on behalf of the rest of the network.

- Traffic from customer-side networks would need to be sent to this central device for routing, which often created inefficient traffic flows, and possibly a bandwidth choke-point.

- Imagine Continue reading

Beware of Vendors Bringing White Papers

A few weeks ago I wrote about tradeoffs vendors have to make when designing data center switching ASICs, followed by another blog post discussing how to select the ASICs for various roles in data center fabrics.

You REALLY SHOULD read the two blog posts before moving on; here’s the buffer-related TL&DR for those of you ignoring my advice ;)

Securing cloud workloads in 5 easy steps

As organizations transition from monolithic services in traditional data centers to microservices architecture in a public cloud, security becomes a bottleneck and causes delays in achieving business goals. Traditional security paradigms based on perimeter-driven firewalls do not scale for communication between workloads within the cluster and 3rd-party APIs outside the cluster. The traditional paradigm also does not provide granular access controls to the workloads and zero-trust architecture, leaving cloud-native applications with a larger attack surface.

Calico Cloud offers an easy 5-step process for fast-tracking your organization’s cloud-native application journey by making security a business enabler while mitigating risk.

Step 1: Visibility

Gaining visibility into workload-to-workload communication with all metadata context intact is one of the biggest challenges when it comes to deploying microservices. You can’t apply security controls to what you can’t see. In this new cloud-native distributed architecture, traffic flows not just from a client to a server but also between namespaces spread across many nodes, causing flow proliferation. With Calico Cloud, you get a dynamic visualization of all traffic flowing through your network in an easy-to-read UI.

Example 1: You can view all the inside and outside (east-west and north-south) connections directly from Calico’s Continue reading

3 SD-WAN Challenges and Solutions

Points to consider when selecting an SD-WAN solution include WAN/LAN branch architecture, deployment and service provisioning, and centralized management.

Heavy Networking 635: Unified Network Fabrics With Juniper Apstra (Sponsored)

In today’s sponsored Heavy Networking we talk to Juniper Apstra about how Apstra delivers on unified data center operations, why fabrics are everywhere, how Apstra differs from other automation and intent solutions, and more. Our guest is Mansour Karam, VP of Product Management.

The post Heavy Networking 635: Unified Network Fabrics With Juniper Apstra (Sponsored) appeared first on Packet Pushers.