First steps with pyATS

Have you ever wanted to compare the operational state of a bunch of network devices between two specific times? Not only whether the interfaces are up or down, but also the number and status of BGP peers, the number of prefixes received, the number of entries in a MAC address table, and so on? This is quite laborious to do with a classical NMS or do-it-yourself scripts. And this is where pyATS can become a real asset. Here are my first steps with pyATS: Network Test & Automation Solution. What is pyATS? pyATS (pronounced…

The post First steps with pyATS appeared first on AboutNetworks.net.
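To make the idea of comparing operational state between two points in time concrete, here is a minimal sketch in plain Python. It does not use the pyATS API; the snapshot layout, the key names, and the `flatten` and `diff_states` helpers are all hypothetical and only illustrate the kind of before/after comparison described above.

```python
from typing import Any

# A path is the sequence of nested keys leading to a leaf value.
Path = tuple[str, ...]


def flatten(state: dict, prefix: Path = ()) -> dict[Path, Any]:
    """Flatten nested dicts into {(key, subkey, ...): leaf_value}."""
    flat: dict[Path, Any] = {}
    for key, value in state.items():
        path = prefix + (str(key),)
        if isinstance(value, dict):
            flat.update(flatten(value, path))
        else:
            flat[path] = value
    return flat


def diff_states(before: dict, after: dict) -> dict[str, dict]:
    """Report leaves that were added, removed, or changed between snapshots."""
    old, new = flatten(before), flatten(after)
    return {
        "added": {p: new[p] for p in new.keys() - old.keys()},
        "removed": {p: old[p] for p in old.keys() - new.keys()},
        "changed": {
            p: (old[p], new[p])
            for p in old.keys() & new.keys()
            if old[p] != new[p]
        },
    }


# Hypothetical snapshots of one device, taken at two different times.
before = {
    "bgp": {"10.0.0.2": {"state": "Established", "prefixes_received": 120}},
    "interfaces": {"Gi0/1": {"oper_status": "up"}},
    "mac_table_entries": 57,
}
after = {
    "bgp": {"10.0.0.2": {"state": "Idle", "prefixes_received": 0}},
    "interfaces": {"Gi0/1": {"oper_status": "up"}, "Gi0/2": {"oper_status": "up"}},
    "mac_table_entries": 57,
}

changes = diff_states(before, after)
for kind, entries in changes.items():
    for path, value in sorted(entries.items()):
        print(kind, "/".join(path), value)
```

Tuple paths are used instead of dotted strings so that keys containing dots, such as IP addresses or subinterface names, cannot collide.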

Mikrotik RouterOS and VyOS Added to netsim-tools

Stefano Sasso took my “Don’t complain, submit a PR” advice seriously and did a wonderful job adding support for Mikrotik RouterOS and VyOS to netsim-tools, increasing the number of supported platforms to twelve. His additions are available in release 1.0.2, which also includes:

- Automatic creation of groups based on BGP AS numbers

- Group-wide node attributes

- Experimental support for Python plugins

Interested? Start with the tutorials and the installation guide, which includes lab-building instructions.
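As a rough illustration of what "automatic creation of groups based on BGP AS numbers" could look like, here is a small Python sketch. It is not netsim-tools code: the node dictionary layout, the `groups_by_bgp_as` helper, and the `as<number>` group naming are assumptions made for this example.

```python
from collections import defaultdict


def groups_by_bgp_as(nodes: dict[str, dict]) -> dict[str, list[str]]:
    """Group node names by their BGP AS number; nodes without BGP are skipped."""
    groups: dict[str, list[str]] = defaultdict(list)
    for name, attrs in nodes.items():
        asn = attrs.get("bgp", {}).get("as")
        if asn is not None:
            # Hypothetical naming scheme: one group per AS, e.g. "as65000".
            groups[f"as{asn}"].append(name)
    return {group: sorted(members) for group, members in sorted(groups.items())}


# Hypothetical lab topology: three routers in two autonomous systems, one switch.
nodes = {
    "r1": {"bgp": {"as": 65000}},
    "r2": {"bgp": {"as": 65000}},
    "r3": {"bgp": {"as": 65001}},
    "sw1": {},
}

print(groups_by_bgp_as(nodes))
```

Once such groups exist, group-wide attributes can be applied to every member at once, which is presumably why the two features shipped together.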

Answering Broadband Questions in the Infrastructure Investment Bill

The recently passed Infrastructure Investment and Jobs Act directs funds, foresight, and structure to closing the digital divide.

Exams 1. Cisco Certified DevNet Expert Lab Exam Overview

Hello my friend,

Cisco has finally announced the expert-level certification in its automation and software development track, named DevNet. The idea behind this certification track is to promote the DevOps mindset and culture in traditional network engineering, and to give engineers the theoretical knowledge and practical skills they need to start developing, maintaining and supporting network automation in Cisco networks.

Brief Description

This lab exam allows you to earn the Expert-level certification, provided you have already passed the theoretical exam DevCore (350-901 Developing Applications using Cisco Core Platforms and APIs). The expert-level certification from Cisco is often very attractive for networking engineers, as it is generally well respected across the industry and acts as a guideline for what an engineer at a certain level is expected to know. The DevNet certification is still relatively young and finding its path, as it steps in the area where it is competing with some Continue reading

PSA: Virtual Interfaces (in ESXi) Aren’t Limited To Reported Interface Speeds

An incorrect assumption comes up from time to time, one that I shared for a while: that VMware ESXi virtual NIC (vNIC) interfaces are limited to their “speed”.

In my stand-alone ESXi 7.0 installation, I have two options for NICs: vmxnet3 and e1000. The vmxnet3 interface shows up at 10 Gigabit on the VM, and the e1000 shows up as a 1 Gigabit interface. Let’s test them both.

One test system is a Rocky Linux installation, the other is CentOS 8 (RIP CentOS). They’re both on the same ESXi host on the same virtual switch. The test program is iperf3, installed from the default package repositories. If you want to test this on your own, it really doesn’t matter which OS you use, as long as it’s decently recent and both systems are on the same vSwitch. I’m not optimizing for throughput, just putting in enough power to try to exceed the reported link speed.

The ESXi host is 7.0 running on an older Intel Xeon E3 with 4 cores (no hyperthreading).

Running iperf3 on the vmxnet3 interfaces, which show up as 10 Gigabit on the Rocky VM:

[ 1.323917] vmxnet3 0000:0b:00.0 ens192: renamed Continue reading
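If you want to script the comparison between measured throughput and the reported link speed, a minimal Python sketch could parse the JSON report that iperf3 produces with the `-J` flag. The helper names are hypothetical, and the sample report at the end is a made-up value used only to exercise the code; it is not a measurement from this test.

```python
import json


def measured_mbps(iperf3_json: str) -> float:
    """Return receiver-side throughput in Mbit/s from an `iperf3 -J` TCP report."""
    report = json.loads(iperf3_json)
    return report["end"]["sum_received"]["bits_per_second"] / 1e6


def exceeds_reported_speed(iperf3_json: str, reported_mbps: float) -> bool:
    """True if measured throughput is higher than the speed the vNIC reports."""
    return measured_mbps(iperf3_json) > reported_mbps


# Illustrative report fragment only (not real test output): 2.5 Gbit/s received.
sample_report = json.dumps({"end": {"sum_received": {"bits_per_second": 2.5e9}}})

print(f"{measured_mbps(sample_report):.0f} Mbit/s")
print("exceeds 1 Gigabit:", exceeds_reported_speed(sample_report, 1_000))
```

On Linux, the reported speed in Mbit/s can usually be read from `/sys/class/net/<interface>/speed` (for example for `ens192`), so both numbers can be collected on the VM itself.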

Git as a Source of Truth for Network Automation

In Git as a source of truth for network automation, Vincent Bernat explained why they decided to use Git-managed YAML files as the source of truth in their network automation project instead of relying on a database-backed GUI/API product like NetBox.

Their decision process was pretty close to what I explained in Data Stores and Source of Truth parts of Network Automation Concepts webinar: you need change logging, auditing, reviews, and all-or-nothing transactions, and most IPAM/CMDB products have none of those.

On a more positive side, NetBox (and its fork, Nautobot) has change logging (HT: Leo Kirchner) and things are getting much better with Nautobot Version Control plugin. Stay tuned ;)

How to Improve Your Network Security

Information is powerful. As our reliance on technology increases, more and more companies are storing classified data on their online networks. Cloud computing is becoming the new norm – but so is cybercrime. When such delicate information is stored on computer networks, it is important for the data to be analyzed, controlled, and protected accordingly. Especially in case of financial matters, it is essential to protect financial information to prevent it from getting into the wrong hands.

Data security is becoming a major concern in the modern world. While companies are paying millions of dollars to ensure network security, their data is still at risk of breaches and cyberthreats. If the network security of a company is compromised, the business risks losing billions of dollars, because a breach betrays the trust of shareholders and customers alike.

If you are unsure about the safety of your network and company data, then you need to take a few extra steps to ensure network security. Here are a few simple, cost-effective steps that you can follow to protect your company data from any potential breaches:

1. Password Strategy

Every data network has strong password encryption. However, one of the Continue reading

Worth Reading: Load Balancing on Network Devices

Christopher Hart wrote a great blog post explaining the fundamentals of how packet load balancing works on network devices. Enjoy.

For more details, watch the Multipath Forwarding part of Advanced Routing Protocol Topics section of How Networks Really Work webinar.
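One common approach to packet load balancing is per-flow hashing: the device hashes header fields of each packet and uses the result to pick one of several equal-cost next hops, so all packets of a flow follow the same path. The Python sketch below only illustrates that idea. The `pick_next_hop` helper, the choice of 5-tuple fields, and the use of SHA-256 are assumptions for this example; real devices use hardware-specific hash functions and field selections.

```python
import hashlib

# A flow is identified here by a 5-tuple:
# (source IP, destination IP, protocol, source port, destination port).
Flow = tuple[str, str, str, int, int]


def pick_next_hop(flow: Flow, next_hops: list[str]) -> str:
    """Map a flow to one next hop; the same flow always maps to the same hop."""
    key = "|".join(str(field) for field in flow).encode()
    digest = hashlib.sha256(key).digest()
    # Use the first 4 bytes of the digest as an unsigned integer bucket selector.
    index = int.from_bytes(digest[:4], "big") % len(next_hops)
    return next_hops[index]


# Hypothetical equal-cost next hops and flows.
next_hops = ["192.0.2.1", "192.0.2.2", "192.0.2.3"]
flows = [
    ("10.0.0.1", "10.0.1.1", "tcp", 49152, 443),
    ("10.0.0.1", "10.0.1.1", "tcp", 49153, 443),
    ("10.0.0.2", "10.0.1.9", "udp", 5353, 53),
]

for flow in flows:
    print(flow, "->", pick_next_hop(flow, next_hops))
```

Because the choice depends only on the flow's header fields, packets within a flow are not reordered, but a few large flows can still land on the same link and leave the others underused.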

Achieving Net Optimization via Automation and Modernization

Organizations should assess if their current network configuration and compliance management tools and processes are holding them back from true network optimization.

A Gift Guide for Sanity In Your Home IT Life

If you’re reading my blog you’re probably the designated IT person for your family or immediate friend group. Just like doctors that get called for every little scrape or plumbers that get the nod when something isn’t draining over the holidays, you are the one that gets an email or a text message when something pops up that isn’t “right” or has a weird error message. These kinds of engagements are hard because you can’t just walk away from them and you’re likely not getting paid. So how can you be the Designated Computer Friend and still keep your sanity this holiday season?

The answer, dear reader, is gifts. If you’re struggling to find something to give your friends that says “I like you but I also want to reduce the number of times that you call me about your computer problems” then you should definitely read on for more info! Note that I’m not going to fill this post with affiliate links or plug products that have sponsored anything. Instead, I’m going to just share the classes or types of devices that I think are the best way to get control of things.

Step 1: Infrastructure Upgrades

When you Continue reading

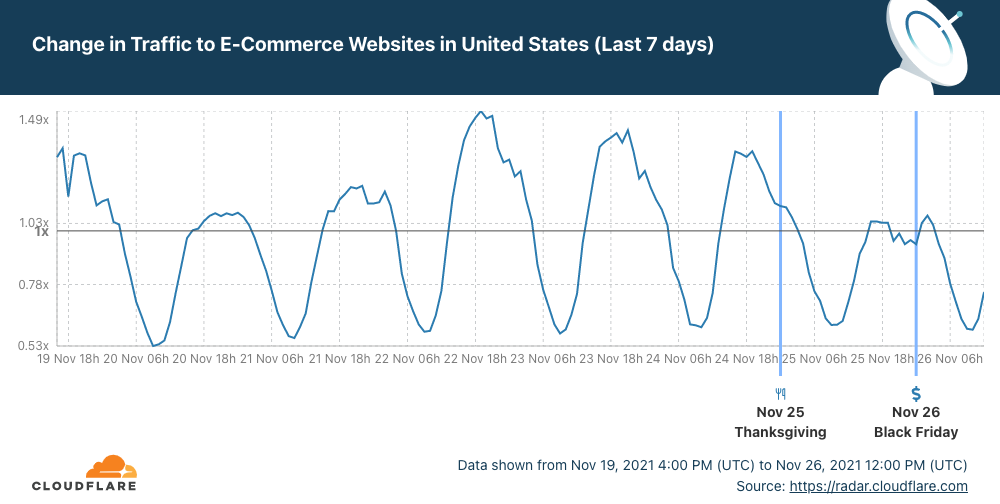

How the US paused shopping (and browsing) for Thanksgiving

If you keep up with tradition in the United States, you and your family celebrated Thanksgiving yesterday (November 25, 2021). On such a special day, with family gatherings for many and a lot of cooking if you’re into the tradition (roast turkey, stuffing and pumpkin pie), it makes sense that different Internet patterns show up on Cloudflare Radar.

First, let’s look at shopping habits. After a busy Monday, Tuesday and Wednesday, online shopping paused for Thanksgiving Day and dipped at lunchtime. So in a very good week for e-Commerce, Thanksgiving was an exception, especially at the extended lunchtime.

Now, let’s focus on Internet traffic at the time of the Thanksgiving dinner. First, what time is that? Every family is different, but a 2018 survey of US consumers showed that for 42%, early afternoon (between 13:00 and 15:00) is the preferred time to sit at the table and start to dig in. But 16:00 seems to be the “correct time”; The Atlantic explains why.

Cloudflare Radar shows that Internet traffic in the US increased over the past seven days, compared with the previous period, and that makes sense given that it’s traditionally a good week for Continue reading

Heavy Networking 608: Everything You Ever Wanted To Know About NAC (And Then Some)

Today's Heavy Networking goes deep on Network Access Control (NAC) for wired and wireless networks. Our guest is Arne Bier, a Senior Consulting Engineer and CCIE. We hit a bunch of topics including MAC authentication bypass, client certificates, EAP methods, and more. We also discuss reasons why NAC is worth deploying despite the effort.

The post Heavy Networking 608: Everything You Ever Wanted To Know About NAC (And Then Some) appeared first on Packet Pushers.