Astro is joining Cloudflare

The Astro Technology Company, creators of the Astro web framework, is joining Cloudflare.

Astro is the web framework for building fast, content-driven websites. Over the past few years, we’ve seen an incredibly diverse range of developers and companies use Astro to build for the web. This ranges from established brands like Porsche and IKEA, to fast-growing AI companies like Opencode and OpenAI. Platforms that are built on Cloudflare, like Webflow Cloud and Wix Vibe, have chosen Astro to power the websites their customers build and deploy to their own platforms. At Cloudflare, we use Astro, too — for our developer docs, website, landing pages, and more. Astro is used almost everywhere there is content on the Internet.

By joining forces with the Astro team, we are doubling down on making Astro the best framework for content-driven websites for many years to come. The best version of Astro — Astro 6 — is just around the corner, bringing a redesigned development server powered by Vite. The first public beta release of Astro 6 is now available, with GA coming in the weeks ahead.

We are excited to share this news and even more thrilled for what Continue reading

Infrahub with Damien Garros

Why do we need Infrahub, another network automation tool? What does it bring to the table, who should be using it, and why is it using a graph database internally?

I discussed these questions with Damien Garros, the driving force behind Infrahub, the founder of OpsMill (the company developing it), and a speaker in the ipSpace.net Network Automation course.

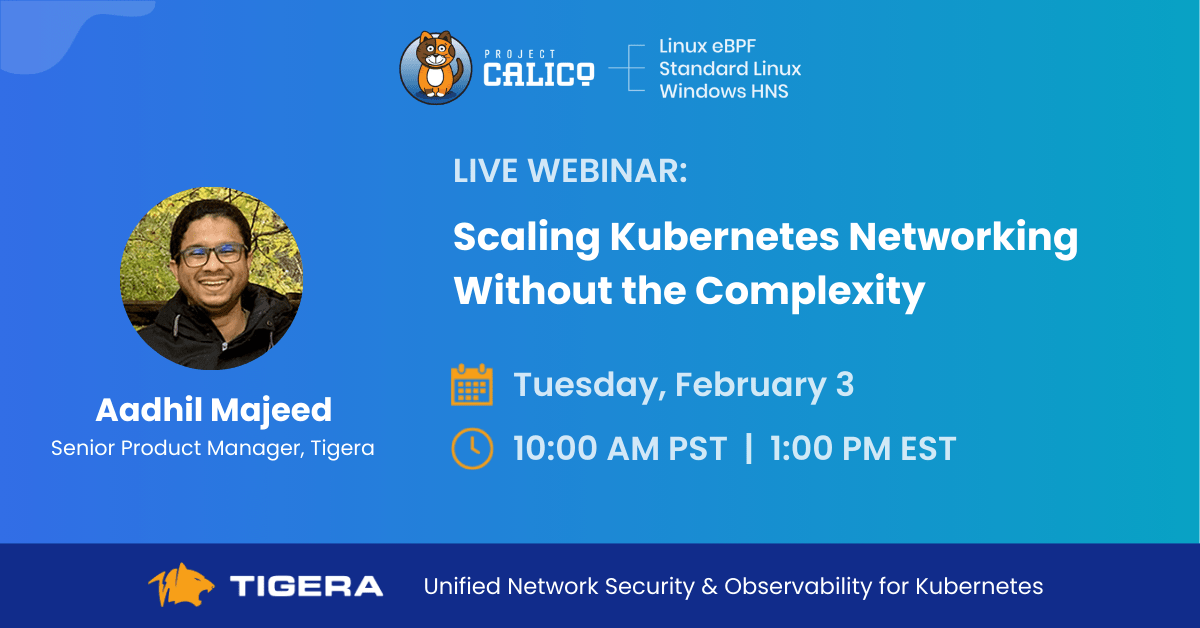

Kubernetes Networking at Scale: From Tool Sprawl to a Unified Solution

As Kubernetes platforms scale, one part of the system consistently resists standardization and predictability: networking. While compute and storage have largely matured into predictable, operationally stable subsystems, networking remains a primary source of complexity and operational risk.

This complexity is not the result of missing features or immature technology. Instead, it stems from how Kubernetes networking capabilities have evolved as a collection of independently delivered components rather than as a cohesive system. As organizations continue to scale Kubernetes across hybrid and multi-environment deployments, this fragmentation increasingly limits agility, reliability, and security.

This post explores how Kubernetes networking arrived at this point, why hybrid environments amplify its operational challenges, and why the industry is moving toward more integrated solutions that bring connectivity, security, and observability into a single operational experience.

The Components of Kubernetes Networking

Kubernetes networking was designed to be flexible and extensible. Rather than prescribing a single implementation, Kubernetes defined a set of primitives and left key responsibilities such as pod connectivity, IP allocation, and policy enforcement to the ecosystem. Over time, these responsibilities were addressed by a growing set of specialized components, each focused on a narrow slice of Continue reading

LIU006: Stay Technical While Expanding Your Influence

Is it possible for IT professionals to remain technical when moving into roles that expand influence, scale, and reach? Matt Starling, Senior Director of Product Marketing at Ekahau and co-founder of the WiFi Ninjas podcast, joins Alexis and Kevin to share how your career can evolve beyond on-call operations without losing the technical core. His... Read more »

Human Native is joining Cloudflare

Today, we’re excited to share that Cloudflare has acquired Human Native, a UK-based AI data marketplace specializing in transforming multimedia content into searchable and useful data.

The Human Native team has spent the past few years focused on helping AI developers create better AI through licensed data. Their technology helps publishers and developers turn messy, unstructured content into something that can be understood, licensed and ultimately valued. They have approached data not as something to be scraped, but as an asset class that deserves structure, transparency and respect.

Access to high-quality data can lead to better technical performance. One of Human Native’s customers, a prominent UK video AI company, threw away their existing training data after achieving superior results with data sourced through Human Native. Going forward they are only training on fully licensed, reputably sourced, high-quality content.

This gives a preview of what the economic model of the Internet can be in the age of generative AI: better AI built on better data, with fair control, compensation and credit for creators.

For the last 30 years, the open Internet has been based on a fundamental value exchange: creators Continue reading

Using netlab to Set Up Demos

David Gee was time-pressed to set up a demo network to showcase his network automation solution and found that an Ubuntu VM running netlab to orchestrate Arista cEOS containers on his Apple Silicon laptop was exactly what he needed.

I fixed a few blog posts based on his feedback (I can’t tell you how much I appreciate receiving a detailed “you should fix this stuff” message, and how rare it is, so thanks a million!), and David was kind enough to add a delightful cherry on top of that cake with this wonderful blurb:

Netlab has been a lifesaver. Ivan’s entire approach, from the software to collecting instructions and providing a meaningful information trail, enabled me to go from zero to having a functional lab in minutes. It has been an absolute lifesaver.

I can be lazy with the infrastructure side, because he’s done all of the hard work. Now I get to concentrate on the value-added functionality of my own systems and test with the full power of an automated and modern network lab. Game-changing.

NAN110: Bridging Industry, Academia, and the Future of Network Automation with Dr. Levi Perigo

Eric Chou is joined by Dr. Levi Perigo, Scholar in Residence and Professor of Network Engineering at the University of Colorado, Boulder. They discuss Levi’s non-traditional career path: 20 years in the network automation industry before shifting to academia and co-founding QuivAR. Levi also dives into the success of the CU Boulder... Read more »

TCG066: How Infrastructure Teams Can Scale Reasoning Without Losing Control with Chris Wade

The industry has pivoted from scripting to automation to orchestration – and now to systems that can reason. Today we explore what AI agents mean for infrastructure with Chris Wade, Co-Founder and CTO of Itential. We also dive into the brownfield reality, the potential for vendor-specific LLMs trained on proprietary knowledge, and advice for the... Read more »

Do You Need IS-IS Areas?

TL&DR: Most probably not, but if you do, you’d better not rely on random blogs for professional advice #justSaying 😜

Here’s an interesting question I got from a reader in the midst of an OSPF-to-IS-IS migration:

Why should one bother with different [IS-IS] areas when the routing hierarchy is induced by the two levels and the appropriate IS-IS circuit types on the links between the routers?

Well, if you think you need a routing hierarchy, you’re bound to use IS-IS areas because that’s how the routing hierarchy is implemented in IS-IS. However…

What came first: the CNAME or the A record?

On January 8, 2026, a routine update to 1.1.1.1 aimed at reducing memory usage accidentally triggered a wave of DNS resolution failures for users across the Internet. The root cause wasn't an attack or an outage, but a subtle shift in the order of records within our DNS responses.

While most modern software treats the order of records in DNS responses as irrelevant, we discovered that some implementations expect CNAME records to appear before everything else. When that order changed, resolution started failing. This post explores the code change that caused the shift, why it broke specific DNS clients, and the 40-year-old protocol ambiguity that makes the "correct" order of a DNS response difficult to define.
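To make the ordering problem concrete, here is a minimal sketch of two ways a client could walk the answer section of a response. The record shape (`name`, `type`, `data`) and both function names are assumptions made for illustration; they are not taken from 1.1.1.1 or from any specific DNS client. The first function indexes the CNAME records before following the chain, so record order does not matter. The second makes a single forward pass and therefore only works when each CNAME appears before the records it points to.

```javascript
// Hypothetical record shape: { name, type, data }. Not a real DNS library API.

// Order-independent: index CNAMEs by owner name, then follow the chain.
function resolveAnyOrder(qname, answers) {
  const cnames = new Map();
  for (const rr of answers) {
    if (rr.type === 'CNAME') cnames.set(rr.name, rr.data);
  }
  let name = qname;
  const seen = new Set(); // guards against CNAME loops
  while (cnames.has(name) && !seen.has(name)) {
    seen.add(name);
    name = cnames.get(name);
  }
  return answers
    .filter((rr) => rr.type === 'A' && rr.name === name)
    .map((rr) => rr.data);
}

// Order-dependent: one forward pass that assumes every CNAME
// precedes the records for its target name.
function resolveSinglePass(qname, answers) {
  let name = qname;
  const addresses = [];
  for (const rr of answers) {
    if (rr.name !== name) continue; // record for a name we are not tracking yet
    if (rr.type === 'CNAME') name = rr.data;
    else if (rr.type === 'A') addresses.push(rr.data);
  }
  return addresses;
}

// Same two records, in both orders (example names and a documentation address).
const cnameFirst = [
  { name: 'www.example.com', type: 'CNAME', data: 'cdn.example.net' },
  { name: 'cdn.example.net', type: 'A', data: '192.0.2.10' },
];
const cnameLast = [cnameFirst[1], cnameFirst[0]];

console.log(resolveAnyOrder('www.example.com', cnameLast));
console.log(resolveSinglePass('www.example.com', cnameFirst));
console.log(resolveSinglePass('www.example.com', cnameLast));
```

With the CNAME placed last, the single-pass version skips the A record because it has not yet learned the target name, and it returns no addresses even though the response contains a complete answer.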

All timestamps referenced are in Coordinated Universal Time (UTC).

| Time (UTC) | Description |
|---|---|
| 2025-12-02 | The record reordering is introduced to the 1.1.1.1 codebase |
| 2025-12-10 | The change is released to our testing environment |
| 2026-01-07 23:48 | A global release containing the change starts |
| 2026-01-08 17:40 | The release reaches 90% of servers |
| 2026-01-08 18:19 | Incident is declared |
| 2026-01-08 18:27 | The release is reverted |
| 2026-01-08 19:55 | Revert is completed. Impact ends |

While making some improvements to lower the memory usage of Continue reading

By Decade’s End, AI Will Drive More Than Half Of All Chip Sales

As the year came to an end, we tore apart IDC’s assessments for server spending, including the huge jump in accelerated supercomputers for running GenAI and more traditional machine learning workloads, and as this year got started, we did forensic analysis and modeling based on the company’s reckoning of Ethernet switching and routing revenues. …

By Decade’s End, AI Will Drive More Than Half Of All Chip Sales was written by Timothy Prickett Morgan at The Next Platform.

From IPVS to NFTables: A Migration Guide for Kubernetes v1.35

Kubernetes Networking Is Changing

Kubernetes v1.35 marks an important turning point for cluster networking. The IPVS backend for kube-proxy has been officially deprecated, and future Kubernetes releases will remove it entirely. If your clusters still rely on IPVS, the clock is now very much ticking.

Staying on IPVS is not just a matter of running older technology. As upstream support winds down, IPVS receives less testing, fewer fixes, and less attention overall. Over time, this increases the risk of subtle breakage, makes troubleshooting harder, and limits compatibility with newer Kubernetes networking features. Eventually, upgrading Kubernetes will force a migration anyway, often at the worst possible time.

Migrating sooner gives you control over the timing, space to test properly, and a chance to avoid turning a routine upgrade into an emergency networking change.

Calico and the Path Forward

Project Calico’s unique design with a pluggable data plane architecture is what makes this transition possible without redesigning cluster networking from scratch. Calico supports a wide range of technologies, including eBPF, iptables, IPVS, Windows HNS, VPP, and nftables, allowing clusters to choose the most appropriate backend for their environment. This flexibility enables clusters to evolve alongside Kubernetes rather than being Continue reading

Exporting events to Loki

Grafana Loki is an open source log aggregation system inspired by Prometheus. While it is possible to use Loki with Grafana Alloy, a simpler approach is to send logs directly using the Loki HTTP API.

The following example modifies the ddos-protect application to use sFlow-RT's httpAsync() function to send events to Loki's HTTP API.

    // Loki connection settings, read from sFlow-RT system properties
    var lokiPort = getSystemProperty("ddos_protect.loki.port") || '3100';
    var lokiPush = getSystemProperty("ddos_protect.loki.push") || '/loki/api/v1/push';
    var lokiHost = getSystemProperty("ddos_protect.loki.host");

    function sendEvent(action,attack,target,group,protocol) {
      // Push to Loki only when a Loki host has been configured
      if(lokiHost) {
        var url = 'http://'+lokiHost+':'+lokiPort+lokiPush;
        var lokiEvent = {
          streams: [
            {
              stream: {
                service_name: 'ddos-protect'
              },
              values: [[
                Date.now()+'000000', // millisecond timestamp padded to nanoseconds
                action+" "+attack+" "+target+" "+group+" "+protocol,
                {
                  detected_level: action == 'release' ? 'INFO' : 'WARN',
                  action: action,
                  attack: attack,
                  ip: target,
                  group: group,
                  protocol: protocol
                }
              ]]
            }
          ]
        };
        httpAsync({
          url: url,
          headers: {'Content-Type':'application/json'},
          operation: 'POST',
          body: JSON.stringify(lokiEvent),
          success: (response) => {
            if (200 != response.status) {
              logWarning("DDoS Loki status " + response.status);
            }
          },
          error: (error) => {
            logWarning("DDoS Loki error " + error);
          }
        });
      }

      // Existing syslog export is unchanged
      if(syslogHosts.length === 0) return;

      var msg = {app:'ddos-protect',action:action,attack:attack,ip:target,group:group,protocol:protocol};
      syslogHosts.forEach(function(host) {
        try {
          syslog(host,syslogPort,syslogFacility,syslogSeverity,msg);
        } catch(e) {
          logWarning('DDoS cannot send syslog to ' + host);
        }
      });
    }
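To inspect the request body without a running sFlow-RT instance or Loki server, the payload construction can be pulled out into a pure function. This is a minimal sketch in plain JavaScript (for example, run under Node.js); `buildLokiEvent` and its `nowMs` parameter are hypothetical names introduced here, and the sample attack, address, and group values are made up for illustration.

```javascript
// Hypothetical helper: builds the same payload shape as sendEvent() above,
// but takes the timestamp as an argument so the output is deterministic.
function buildLokiEvent(action, attack, target, group, protocol, nowMs) {
  return {
    streams: [{
      stream: { service_name: 'ddos-protect' },
      values: [[
        String(nowMs) + '000000', // milliseconds padded to a nanosecond string
        [action, attack, target, group, protocol].join(' '),
        {
          detected_level: action === 'release' ? 'INFO' : 'WARN',
          action: action,
          attack: attack,
          ip: target,
          group: group,
          protocol: protocol
        }
      ]]
    }]
  };
}

// Made-up sample values, using a documentation IP address.
const sample = buildLokiEvent('add', 'udp_flood', '192.0.2.1', 'external', 'udp', 1700000000000);
console.log(JSON.stringify(sample, null, 2));
```

Each entry in `values` holds the timestamp as a string of nanoseconds, the log line, and an object of extra key/value pairs, which is why the millisecond value from `Date.now()` is padded with six zeros.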

PP092: News Roundup–Old Gear Faces New Attacks, Cyber Trust Mark’s Trust Issues, Alarms Howl for Kimwolf Botnet

Everything old is new again in this Packet Protector news roundup, from end-of-life D-Link routers facing active exploits (and no patch coming) to a five-year-old Fortinet vulnerability being freshly targeted by threat actors (despite a patch having been available for five years). We also dig into a clever, multi-stage attack against hotel operators that could... Read more »

HS122: Insider Threats in the Age of AI

Leaders may shy away from thinking about insider threats because it means assuming the worst about colleagues and friends. But technology executives do need to confront this problem because insider attacks are prevalent—a recent study claims that in 2024, 83% of organizations experienced at least one—and on the rise. Moreover, AI and deepfakes vastly enhance... Read more »

Ultra Ethernet: Congestion Control Context

Ultra Ethernet Transport (UET) uses a vendor-neutral, sender-specific congestion window–based congestion control mechanism together with flow-based, adjustable entropy-value (EV) load balancing to manage incast, outcast, local, link, and network congestion events. Congestion control in UET is implemented through coordinated sender-side and receiver-side functions to enforce end-to-end congestion control behavior.

On the sender side, UET relies on the Network-Signaled Congestion Control (NSCC) algorithm. Its main purpose is to regulate how quickly packets are transmitted by a Packet Delivery Context (PDC). The sender adapts its transmission window based on round-trip time (RTT) measurements and Explicit Congestion Notification (ECN) Congestion Experienced (CE) feedback conveyed through acknowledgments from the receiver.

On the receiver side, Receiver Credit-based Congestion Control (RCCC) limits incast pressure by issuing credits to senders. These credits define how much data a sender is permitted to transmit toward the receiver. The receiver also observes ECN-CE markings in incoming packets to detect path congestion. When congestion is detected, the receiver can instruct the sender to change the entropy value, allowing traffic to be steered away from congested paths.

Both sender-side and receiver-side mechanisms ultimately control congestion by limiting the amount of in-flight data, meaning data that has been sent but not yet acknowledged.
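The idea of bounding in-flight data can be sketched with a small model. This is a simplified illustration only and does not implement NSCC or RCCC: the class name, the byte sizes, and the window adjustment rule (halve on an ECN-CE mark, grow by one packet otherwise) are all assumptions chosen to keep the example short, and the sender window and receiver credit are combined in one object purely for convenience.

```javascript
// Simplified model, NOT the NSCC or RCCC algorithms from the UET specification.
class InflightLimiter {
  constructor(windowBytes, creditBytes) {
    this.windowBytes = windowBytes; // sender-side congestion window
    this.creditBytes = creditBytes; // credit granted by the receiver
    this.inflightBytes = 0;         // sent but not yet acknowledged
  }

  // A packet may be sent only if it fits in both the window and the credit.
  canSend(size) {
    return this.inflightBytes + size <= this.windowBytes &&
           size <= this.creditBytes;
  }

  send(size) {
    if (!this.canSend(size)) return false;
    this.inflightBytes += size;
    this.creditBytes -= size;
    return true;
  }

  // Acknowledgment frees in-flight data and adjusts the window.
  // Hypothetical rule: halve on ECN-CE, otherwise grow by one packet.
  onAck(size, ecnCe) {
    this.inflightBytes = Math.max(0, this.inflightBytes - size);
    if (ecnCe) {
      this.windowBytes = Math.max(size, Math.floor(this.windowBytes / 2));
    } else {
      this.windowBytes += size;
    }
  }

  // Receiver issues more credit.
  grantCredit(bytes) {
    this.creditBytes += bytes;
  }
}

const pdc = new InflightLimiter(8192, 16384);
const first = pdc.send(4096);   // fits in window and credit
const second = pdc.send(4096);  // window is now full
const third = pdc.send(4096);   // rejected: would exceed the window
pdc.onAck(4096, true);          // congestion signalled: window shrinks
const fourth = pdc.send(4096);  // still rejected: smaller window is full
console.log(first, second, third, fourth, pdc.windowBytes);
```

In this model a send is refused either because the window is full or because the receiver has not granted enough credit, which mirrors the point that both sides act on the same quantity: the amount of unacknowledged data.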

netlab 26.01: EVPN for VXLAN-over-IPv6, Netscaler

I completely rewrote netlab’s device configuration file generation during the New Year break. netlab Release 26.01 no longer uses Ansible Jinja2 functionality and works with Ansible releases 12/13, which are used solely for configuration deployment. I had to break a few eggs to get there; if you encounter any problems, please open an issue.

Other new features include:

- EVPN for VXLAN-over-IPv6

- The ‘skip_config’ node attribute that can be used to deploy partially-provisioned labs

- Lightweight netlab API HTTP server by Craig Johnson

- Rudimentary support for Citrix Netscaler by Seb d’Argoeuves

You’ll find more details (and goodies) in the release notes.

What we know about Iran’s Internet shutdown

In late December 2025, wide-scale protests erupted across multiple cities in Iran. While these protests were initially fueled by frustration over inflation, food prices, and currency depreciation, they have grown into demonstrations demanding a change in the country’s leadership regime.

In the last few days, Internet traffic from Iran has effectively dropped to zero. This is evident in the data available in Cloudflare Radar, as we’ll describe in this post.

The Iranian government has a history of cutting off Internet connectivity when such protests take place. In November 2019, protests erupted following the announcement of a significant increase in fuel prices. In response, the Iranian government implemented an Internet shutdown for more than five days. In September 2022, protests and demonstrations erupted across Iran in response to the death in police custody of Mahsa/Zhina Amini, a 22-year-old woman from the Kurdistan Province of Iran. Internet services were disrupted across multiple network providers in the following days.

Amid the current protests, lower traffic volumes were already observed at the start of the year, indicating potential connectivity issues leading into the more dramatic shutdown that has followed.

Some traffic anomalies Continue reading